Data Engineering Playbook — Part 7: DataOps & Team Operations Playbook (Series Finale)

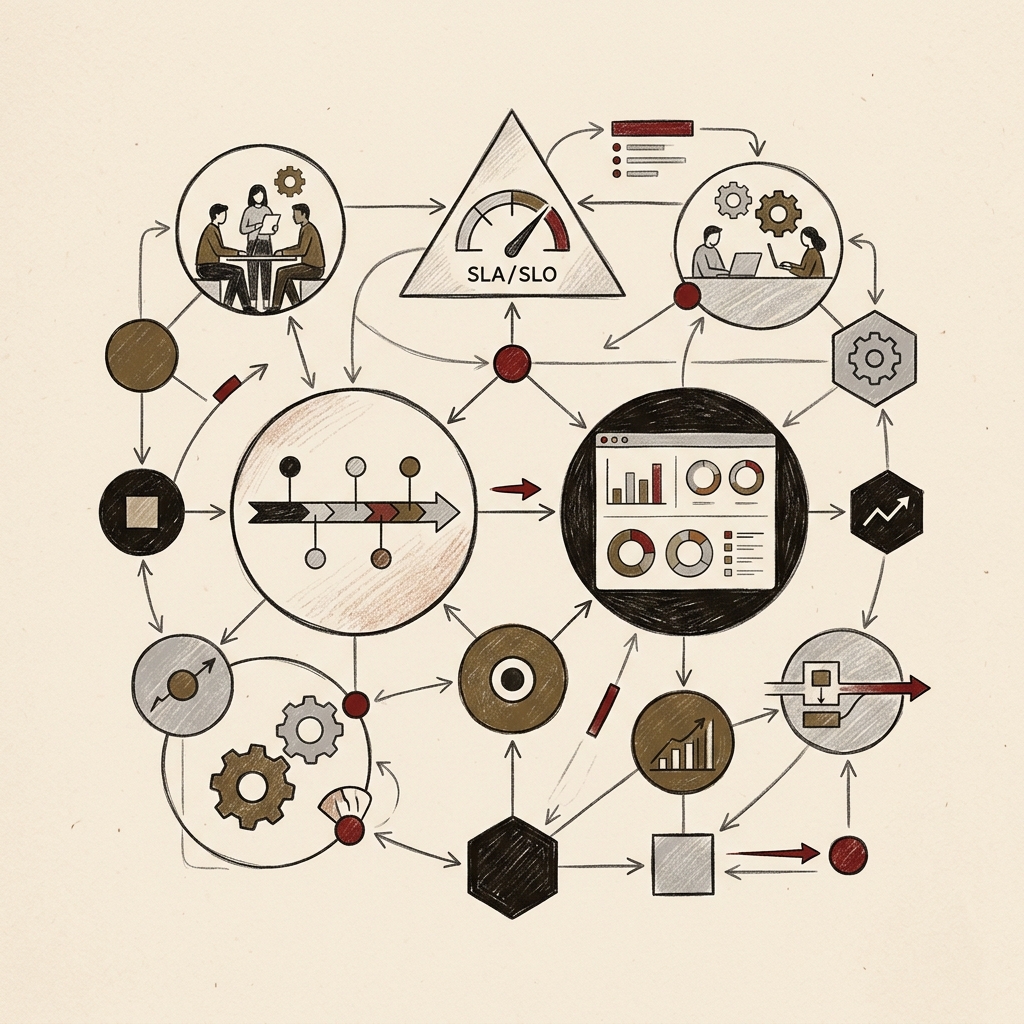

Running pipelines as trustworthy data products requires a DataOps culture and a team operating system. This guide covers the five DataOps principles with CI/CD automation, team structure design by scale, quantifying reliability with SLA/SLO/SLI and error budgets, systematizing incident response with on-call rotations, runbooks, and postmortems, and safe data publishing with the Write-Audit-Publish pattern, with code examples throughout. This is the final installment of the seven-part series.

Series Overview

- Part 1 — Overview & 2026 Key Trends (published)

- Part 2 — Data Architecture Design (published)

- Part 3 — Building Data Pipelines (published)

- Part 4 — Data Quality & Governance (published)

- Part 5 — Cloud & Infrastructure (FinOps, IaC) (published)

- Part 6 — AI-Native Data Engineering (published)

- Part 7 — DataOps & Team Operations Playbook (current · series finale)

Table of Contents

- DataOps — Running Pipelines as Products

- Data Team Structure Design

- Data SLA / SLO / SLI Framework

- On-Call Operations Playbook

- Runbook Writing Guide

- Write-Audit-Publish (WAP) Pattern

- Data Engineer Career Path & Skills Roadmap

- 2026–2028 Future Outlook

- Playbook Summary — Prioritization Framework for Your Team

1. DataOps — Running Pipelines as Products

What Is DataOps?

DataOps applies DevOps principles to the world of data. It is an operating philosophy where data engineers, data scientists, analysts, and business stakeholders collaborate without silos — treating pipelines like code, managing them under version control, and using automation to achieve quality and velocity at the same time.

The core message is simple: "Run data pipelines not as ad-hoc scripts, but as trustworthy products."

Organizations that adopt a DataOps culture report reducing operational overhead by roughly 20–25% through automation and reuse. Data engineers evolve from pipeline plumbers into platform stewards who shape architecture and strategy.

The Five Core Principles of DataOps

DataOps Maturity Model

Level 0 — Chaos

- Pipelines are a collection of scripts. Nobody knows who owns what.

- Incidents turn into blame-finding exercises. No documentation.

Level 1 — Repeatable

- Pipeline code stored in Git.

- Basic tests and alerts exist. On-call rotation started.

Level 2 — Defined

- CI/CD automated. Data contracts beginning to be applied.

- SLAs defined. Data catalog being built.

Level 3 — Managed

- Quality SLA monitoring across all pipelines.

- Cost attribution implemented. Automated drift detection.

- Complete runbooks. Postmortem culture established.

Level 4 — Optimizing

- AI-driven anomaly detection. Agentic self-healing beginning.

- Data product-level operations. Data literacy spreading company-wide.

DataOps CI/CD — Automating Pipeline Deployments

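# .github/workflows/dataops_pipeline.yml
# Fully automated data pipeline CI/CD
# Tool installation steps (sqlfluff, ruff, dbt) are omitted for brevity.
name: DataOps Pipeline CI/CD

on:
  pull_request:
    paths: ['models/**', 'tests/**', 'pipelines/**']
  push:
    branches: [main]

jobs:
  # Stage 1: Code quality checks
  lint_and_format:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: SQL format check (sqlfluff)
        run: sqlfluff lint models/ --dialect snowflake
      - name: Python lint (ruff)
        run: ruff check pipelines/

  # Stage 2: dbt model tests
  dbt_test:
    needs: lint_and_format
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: dbt build & test (CI schema)
        # state:modified+ and --defer compare against a production manifest;
        # ./prod-artifacts is an assumed location for that manifest.
        run: |
          dbt deps
          dbt build --target ci \
            --select state:modified+ \
            --defer --state ./prod-artifacts
        env:
          DBT_SNOWFLAKE_ACCOUNT: ${{ secrets.DBT_SNOWFLAKE_ACCOUNT }}
      - name: Data contract validation
        run: python scripts/validate_contracts.py --changed-models

  # Stage 3: Integration tests (pull requests only)
  integration_test:
    needs: dbt_test
    runs-on: ubuntu-latest
    if: github.event_name == 'pull_request'
    steps:
      - uses: actions/checkout@v4
      - name: Quality gate check (Great Expectations)
        run: great_expectations checkpoint run nightly_quality_check

  # Stage 4: Production deploy (pushes to main only)
  deploy_production:
    # Depends only on dbt_test: integration_test is skipped on push events,
    # and a job that needs a skipped job is itself skipped by default.
    needs: dbt_test
    runs-on: ubuntu-latest
    if: github.event_name == 'push' && github.ref == 'refs/heads/main'
    environment: production
    steps:
      - uses: actions/checkout@v4
      - name: dbt production deploy
        run: dbt run --target prod --select state:modified+ --state ./prod-artifacts
      - name: Deploy notification (Slack)
        # The commit SHA is sent instead of the commit message, because a
        # message containing quotes or newlines would break the JSON payload.
        env:
          SLACK_WEBHOOK: ${{ secrets.SLACK_WEBHOOK }}
          COMMIT_SHA: ${{ github.sha }}
        run: |
          curl -X POST -H 'Content-Type: application/json' "$SLACK_WEBHOOK" \
            -d "{\"text\":\"Data pipeline deployed: ${COMMIT_SHA}\"}"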
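The workflow above calls `scripts/validate_contracts.py`, whose contents are not shown in this guide. As a rough sketch of what such a check might do, the following compares a declared contract against the schema a warehouse reports. `ColumnSpec`, `validate_contract`, and the sample schemas are hypothetical names chosen for illustration, not the actual script.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ColumnSpec:
    """One column promised by a data contract (hypothetical structure)."""
    name: str
    dtype: str


def validate_contract(contract: list[ColumnSpec], actual: dict[str, str]) -> list[str]:
    """Return human-readable violations; an empty list means the contract holds.

    `actual` maps column name -> data type as reported by the warehouse.
    """
    violations = []
    for col in contract:
        if col.name not in actual:
            violations.append(f"missing column: {col.name}")
        elif actual[col.name].upper() != col.dtype.upper():
            violations.append(
                f"type mismatch on {col.name}: expected {col.dtype}, got {actual[col.name]}"
            )
    return violations


# Hypothetical contract for an orders model
orders_contract = [
    ColumnSpec("order_id", "NUMBER"),
    ColumnSpec("user_id", "NUMBER"),
    ColumnSpec("ordered_at", "TIMESTAMP_NTZ"),
]

# Simulated warehouse schema after an upstream change (user_id became VARCHAR)
actual_schema = {
    "order_id": "NUMBER",
    "user_id": "VARCHAR",
    "ordered_at": "TIMESTAMP_NTZ",
}

problems = validate_contract(orders_contract, actual_schema)
for problem in problems:
    print(problem)
```

In a CI step, a non-empty `problems` list would translate into a non-zero exit code so that the pull request is blocked until the contract or the model is updated.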

2. Data Team Structure Design

How Team Structure Evolves with Scale

There is no universal right answer. The best structure is the one that fits your current team size and maturity.

Small Team (1–5 people) — Full-Stack Data Engineers

[Data Engineer (1-3 people)]

- Handles ingestion + transformation + pipelines + infrastructure

- Tools: dbt + Airflow + single cloud (Snowflake or BigQuery)

- Priority: Fast value delivery > perfect architecture

Mid-Sized Team (5–20 people) — Role Specialization Begins

[Data Platform Engineer (2-4 people)]

- Infrastructure, IaC, shared platform components

[Analytics Engineer (2-4 people)]

- dbt modeling, BI integration, business logic implementation

[Data Engineer (2-4 people)]

- Ingestion pipelines, streaming, batch ETL

[ML Engineer / MLOps (1-2 people)]

- Feature Store, model serving pipelines

Large Team (20+ people) — Domain-Based Distribution

[Central Data Platform Team] — shared infrastructure, governance

- Platform Engineer, DataGovOps, FinOps

[Domain-Embedded Data Engineers]

- Commerce domain: order/payment pipelines

- Marketing domain: campaign/funnel pipelines

- ML Platform Team: Feature Store, model pipelines

Core Role Definitions

| Role | Core Responsibility | Required Skills |
|---|---|---|
| Data Engineer | Design, build, and operate pipelines | Python, SQL, Spark, Airflow |
| Analytics Engineer | dbt transformations, business metric definitions | SQL, dbt, business domain knowledge |
| Data Platform Engineer | Shared infrastructure, IaC, platform components | Terraform, Kubernetes, cloud |
| MLOps Engineer | ML pipelines, Feature Store, model serving | Python, MLflow, Kubernetes |
| Data Architect | Company-wide data architecture design and standards | Broad technical depth + business perspective |

Onboarding — Getting a New Team Member Productive in 30 Days

Week 1: Environment Orientation

- Set up cloud accounts and tool access

- Fully understand one core pipeline DAG

- Read one runbook

- Explore the data catalog

Week 2: First Contributions

- Add one dbt test to an existing pipeline

- Write one data contract

- Shadow an on-call engineer once

Weeks 3-4: Independent Work

- Build one medium-complexity pipeline independently

- Conduct 2-3 PR reviews

- Contribute to writing one runbook

3. Data SLA / SLO / SLI Framework

Understanding the Three Concepts

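These three concepts form a hierarchy. A Service Level Indicator (SLI) is what you measure, a Service Level Objective (SLO) is the internal target you set for it, and a Service Level Agreement (SLA) is what you promise to stakeholders. SLOs should always be stricter than SLAs: the gap between them is a safety margin that lets you react before a promise is broken. The error budget is the amount of unreliability the SLO itself permits (100% minus the SLO) over a given period.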
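To make the hierarchy concrete, here is a minimal sketch that classifies a measured freshness SLI against an SLO and an SLA. The function name `classify_freshness` and the status labels are illustrative assumptions; the 2-hour and 4-hour thresholds are the Tier 1 values defined later in this section.

```python
def classify_freshness(sli_hours: float, slo_hours: float, sla_hours: float) -> str:
    """Classify a measured freshness value against internal and external targets."""
    if slo_hours >= sla_hours:
        # For "lower is better" metrics, the SLO must be the smaller number
        raise ValueError("SLO must be stricter than the SLA")
    if sli_hours < slo_hours:
        return "healthy"
    if sli_hours < sla_hours:
        # Internal target missed, external promise still intact
        return "slo_breached"
    return "sla_breached"


print(classify_freshness(1.5, slo_hours=2, sla_hours=4))  # healthy
print(classify_freshness(3.0, slo_hours=2, sla_hours=4))  # slo_breached
print(classify_freshness(5.0, slo_hours=2, sla_hours=4))  # sla_breached
```

The middle state is the useful one: it signals that the team should act while the commitment to stakeholders still holds.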

Data Pipeline SLI Definitions

# sli_definitions.py
# Data pipeline SLI definitions and automated measurement
# The measure_* helpers are assumed to be defined elsewhere in the project.
from dataclasses import dataclass
from typing import Callable


@dataclass
class DataSLI:
    name: str
    description: str
    unit: str
    measurement_fn: Callable
    owner: str


# Freshness
freshness_sli = DataSLI(
    name="data_freshness_hours",
    description="Hours elapsed since last successful refresh",
    unit="hours",
    measurement_fn=lambda table: measure_freshness(table),
    owner="data-platform@company.com",
)

# Completeness
completeness_sli = DataSLI(
    name="completeness_rate_pct",
    description="Percentage of rows with non-NULL values in required columns",
    unit="percent",
    measurement_fn=lambda table: measure_completeness(table),
    owner="data-platform@company.com",
)

# Volume Normality
volume_sli = DataSLI(
    name="daily_row_volume_zscore",
    description="Z-score of today's row count vs. 7-day average",
    unit="z-score",
    measurement_fn=lambda table: measure_volume_anomaly(table),
    owner="data-platform@company.com",
)

# Pipeline Success Rate
pipeline_sli = DataSLI(
    name="pipeline_success_rate_7d",
    description="Pipeline run success rate over the last 7 days",
    unit="percent",
    measurement_fn=lambda dag_id: measure_pipeline_success(dag_id),
    owner="data-platform@company.com",
)
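The SLI definitions above delegate to measurement helpers that are not shown. As one possible sketch of two of them, the functions below compute freshness from timestamps and a volume z-score from a row-count history. The names `freshness_hours` and `volume_zscore` and the sample numbers are assumptions for illustration; a real implementation would read these inputs from the warehouse or orchestrator metadata.

```python
from datetime import datetime, timezone
from statistics import mean, stdev


def freshness_hours(last_refresh: datetime, now: datetime) -> float:
    """Hours elapsed between the last successful refresh and `now`."""
    return (now - last_refresh).total_seconds() / 3600


def volume_zscore(today_rows: int, history: list[int]) -> float:
    """Z-score of today's row count against a history of daily counts."""
    if len(history) < 2:
        raise ValueError("need at least two historical points")
    sigma = stdev(history)  # sample standard deviation
    if sigma == 0:
        # Flat history: any deviation at all is treated as an extreme anomaly
        if today_rows == history[0]:
            return 0.0
        return float("inf") if today_rows > history[0] else float("-inf")
    return (today_rows - mean(history)) / sigma


last = datetime(2026, 4, 19, 4, 0, tzinfo=timezone.utc)
now = datetime(2026, 4, 19, 7, 30, tzinfo=timezone.utc)
print(freshness_hours(last, now))  # 3.5

history = [100, 102, 98, 101, 99, 100, 100]  # made-up 7-day row counts
print(volume_zscore(100, history))      # 0.0
print(volume_zscore(120, history) > 3)  # True
```

A seven-point history is short for a stable standard deviation, so in practice teams often widen the window or use a robust statistic such as the median absolute deviation.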

SLO Definitions by Tier

# slo_definitions.yaml
slos:
  # Tier 1: Business Critical (KPIs, Finance, AI Training Data)
  - name: "fct_daily_revenue"
    tier: 1
    freshness:
      slo: "< 2 hours"
      sla: "< 4 hours"
    completeness:
      slo: "> 99.9%"
      sla: "> 99.5%"
    volume_anomaly:
      slo: "Z-score < 3"
      sla: "Z-score < 4"
    pipeline_success_rate:
      slo: "> 99.5% (7-day rolling)"
      sla: "> 99.0% (30-day rolling)"
    error_budget_monthly: "0.5% = 3.6 hours/month"
    on_call_severity: "P1 - Immediate response"

  # Tier 2: Operational Analytics (Marketing, Operations Dashboards)
  - name: "marketing_attribution"
    tier: 2
    freshness:
      slo: "< 8 hours"
      sla: "< 12 hours"
    pipeline_success_rate:
      slo: "> 99.0%"
      sla: "> 98.0%"
    on_call_severity: "P2 - Business hours response"

  # Tier 3: Exploratory Analysis
  - name: "raw_event_logs"
    tier: 3
    freshness:
      slo: "< 24 hours"
      sla: "< 48 hours"
    on_call_severity: "P3 - Next business day response"

Error Budget Calculator

def calculate_error_budget(slo_percent: float, period_days: int = 30) -> dict:
    """
    Calculate error budget from SLO percentage.
    Example: SLO 99.5%, 30 days -> error budget = 3.6 hours/month
    """
    error_budget_pct = 100 - slo_percent
    total_minutes = period_days * 24 * 60
    error_budget_minutes = total_minutes * (error_budget_pct / 100)
    return {
        "slo_percent": slo_percent,
        "error_budget_percent": round(error_budget_pct, 3),
        "error_budget_minutes": round(error_budget_minutes, 1),
        "error_budget_hours": round(error_budget_minutes / 60, 2),
        "period_days": period_days,
    }


# SLO 99.9%, 30 days -> error budget 0.72 hours
print(calculate_error_budget(99.9, 30))
# {'slo_percent': 99.9, 'error_budget_percent': 0.1,
#  'error_budget_minutes': 43.2, 'error_budget_hours': 0.72, 'period_days': 30}

# SLO 99.5%, 30 days -> error budget 3.6 hours
print(calculate_error_budget(99.5, 30))
# {'error_budget_hours': 3.6, ...}
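An error budget becomes useful once you track how much of it has been spent. The sketch below is one way to do that, assuming downtime is recorded in minutes. The function name `remaining_error_budget` and the `freeze_risky_changes` flag are illustrative; the flag reflects a common SRE convention of pausing risky releases once the budget is exhausted, which this guide does not itself prescribe.

```python
def remaining_error_budget(
    slo_percent: float, period_days: int, downtime_minutes: float
) -> dict:
    """Compare recorded downtime against the budget implied by the SLO."""
    budget_minutes = period_days * 24 * 60 * (100 - slo_percent) / 100
    remaining = budget_minutes - downtime_minutes
    return {
        "budget_minutes": round(budget_minutes, 1),
        "remaining_minutes": round(remaining, 1),
        "consumed_pct": round(100 * downtime_minutes / budget_minutes, 1),
        # Assumed policy: stop shipping risky changes once the budget is gone
        "freeze_risky_changes": remaining <= 0,
    }


# One 70-minute incident in a 30-day window under a 99.5% SLO
status = remaining_error_budget(99.5, 30, downtime_minutes=70)
print(status)
```

With a 216-minute monthly budget, a single 70-minute incident consumes roughly a third of it, which gives the team a concrete number to weigh against planned migrations or schema changes.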

4. On-Call Operations Playbook

What Is On-Call?

On-call is the practice of monitoring data pipeline health around the clock and responding immediately to failures. Borrowed from SRE (Site Reliability Engineering) culture, it is essential for maintaining data platform reliability.

On-call is not a system for overworking engineers. A well-designed on-call rotation naturally leads the entire team to understand their systems more deeply and build more resilient pipelines.

On-Call Design Principles

① Rotation: No one should be on-call more than once per week

→ Prevents burnout, promotes knowledge sharing

② Escalation layers: Primary → Secondary → Domain expert → Manager

→ Auto-escalate if primary cannot resolve within 30 minutes

③ Prevent alert fatigue: Every alert must be Actionable

→ Immediately remove or downgrade non-actionable alerts

④ On-call compensation: Clear compensation policy for nights/weekends

→ Comp time or on-call allowance

⑤ Post-incident review (Postmortem): All P1 incidents documented within 48 hours

→ Blameless culture
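The escalation principle above can be sketched as a small lookup. The source only states that the primary escalates after 30 minutes; applying the same 30-minute interval to every later layer is an assumption made here for simplicity, and the names `ESCALATION_CHAIN` and `current_escalation_level` are hypothetical.

```python
ESCALATION_CHAIN = ["primary", "secondary", "domain_expert", "manager"]
ESCALATION_INTERVAL_MIN = 30  # assumed to apply at every layer


def current_escalation_level(minutes_unresolved: int) -> str:
    """Return who should hold the incident after the given unresolved time."""
    if minutes_unresolved < 0:
        raise ValueError("minutes_unresolved must be non-negative")
    # One step up the chain per elapsed interval, capped at the last layer
    step = min(minutes_unresolved // ESCALATION_INTERVAL_MIN, len(ESCALATION_CHAIN) - 1)
    return ESCALATION_CHAIN[step]


for minutes in (0, 29, 30, 65, 500):
    print(minutes, current_escalation_level(minutes))
```

Paging tools usually express this as configuration rather than code, but writing it down this way makes the policy easy to review and test.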

Incident Response Flow

Postmortem Template

# Incident Postmortem: [Pipeline Name] [Date]

## Summary

- Severity: P1 / P2 / P3

- Duration: YYYY-MM-DD HH:MM - HH:MM (N hours N minutes)

- Affected scope: fct_orders, Revenue Dashboard, X ML model

- Root cause: (one-line summary)

## Timeline

| Time | Event |
|---|---|
| 06:00 | Pipeline failure alert received |
| 06:05 | On-call engineer begins triage |
| 06:20 | Source DB schema change identified |
| 06:45 | Pipeline patched and re-run |
| 07:10 | All downstream systems verified |

## Root Cause Analysis (5-Why)

1. Why did the pipeline fail?

→ Source table user_id column type changed from INT to VARCHAR

2. Why was this change not detected in advance?

→ No schema change policy in the data contract with the source team

3. Why was the data contract incomplete?

→ Breaking Change definition was missing at contract creation time

## Action Items

| Action | Owner | Deadline |
|---|---|---|
| Add schema change policy to data contract | Kim | 2026-04-26 |
| Add automated source schema drift monitor | Lee | 2026-05-03 |
| Agree on Breaking Change process with source team | Park | 2026-05-10 |

## Blameless Retrospective

- What went well: Quick impact assessment, clear communication

- What to improve: Automate schema change monitoring

5. Runbook Writing Guide

A runbook should be written so that "an engineer encountering it at 3 AM for the first time can resolve the problem." A good runbook presents the complete path from initial diagnosis to resolution when an alert fires. It ensures consistent incident response regardless of who is on-call, and helps new team members respond effectively from day one.

Standard Runbook Structure

A runbook consists of five sections.

Header: Basic Information

Pipeline: daily_orders_pipeline

Owner: Data Platform Team / data-platform@company.com

SLA: Refreshed before 7 AM daily

Related dashboards: Revenue Dashboard, Inventory Dashboard

Last updated: 2026-04-19

Alert Response: "daily_orders_pipeline FAILED"

Step 1. Open the Airflow UI, find the failed task, and review its logs.

Step 2. Check for source DB connectivity errors.

python scripts/check_source_connection.py --source orders_db

# On connection failure: escalate to DBA on-call team

Step 3. Check for schema changes.

-- Check source table schema in Snowflake

SELECT column_name, data_type

FROM information_schema.columns

WHERE table_name = 'ORDERS'

ORDER BY ordinal_position;

-- If schema change found: fix dbt model and re-run

Step 4. Check for data volume anomalies.

SELECT COUNT(*), MAX(ordered_at)

FROM raw.orders

WHERE ordered_at::date = CURRENT_DATE - 1;

-- If 0 rows: source system issue → escalate to source team

Step 5. Manual re-run.

airflow dags trigger daily_orders_pipeline \

--conf '{"execution_date": "2026-04-19"}'

Alert Response: "fct_orders FRESHNESS EXCEEDED 4h"

- Check the last successful run time in Airflow

- Check whether any runs are queued or stuck

- Check the status of the upstream pipeline (stg_orders)

dbt run --select fct_orders+ --target prod

dbt test --select fct_orders

Escalation Contacts

| Situation | Escalation Target | Contact Method |
|---|---|---|
| Source DB outage | DBA on-call | PagerDuty escalation |
| Snowflake outage | Snowflake Support | support.snowflake.com |
| Business-critical data missing | Data team lead | Direct call |
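The decision path in Steps 2 through 5 of the "daily_orders_pipeline FAILED" response can also be written down as code, which helps keep the runbook and any automation in sync. This is a minimal sketch: `TriageFindings` and `next_action` are hypothetical names, and the findings would in practice come from the connectivity script and the SQL checks shown above.

```python
from dataclasses import dataclass


@dataclass
class TriageFindings:
    """Results of the runbook's diagnostic steps (hypothetical structure)."""
    source_reachable: bool     # Step 2: source DB connectivity check
    schema_changed: bool       # Step 3: information_schema comparison
    yesterday_row_count: int   # Step 4: row count for the previous day


def next_action(findings: TriageFindings) -> str:
    """Map diagnostic findings to the action the runbook prescribes."""
    if not findings.source_reachable:
        return "escalate: DBA on-call"
    if findings.schema_changed:
        return "fix dbt model, then re-run"
    if findings.yesterday_row_count == 0:
        return "escalate: source team"
    # Step 5: nothing structural found, so re-run manually
    return "manual re-run"


print(next_action(TriageFindings(True, True, 1200)))
print(next_action(TriageFindings(True, False, 0)))
```

The checks are evaluated in the same order as the runbook steps, so the first failing check determines the action.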

6. Write-Audit-Publish (WAP) Pattern

The Write-Audit-Publish (WAP) pattern writes new data to an isolated staging location (here, an Iceberg branch), audits it with quality checks, and publishes it to consumers only if every check passes. Consumers therefore never read unvalidated data.

# WAP pattern implementation (Apache Iceberg branch)
# Sketch only: branch-scoped writes and fast-forward support vary by PyIceberg
# version, so verify each call against the version you have installed.
import datetime

from pyiceberg.catalog import load_catalog


def write_audit_publish(
    data_df,  # a pyarrow.Table; PyIceberg writes Arrow tables
    table_name: str,
    quality_checks: list,
) -> bool:
    """Publish data safely using the WAP pattern."""
    catalog = load_catalog("default")
    table = catalog.load_table(table_name)

    # WRITE: create an audit branch from the current snapshot and write to it
    audit_branch = f"audit-{datetime.date.today().isoformat()}"
    current = table.current_snapshot()
    table.manage_snapshots().create_branch(current.snapshot_id, audit_branch).commit()
    # Assumption: recent PyIceberg releases accept branch= on overwrite()
    table.overwrite(data_df, branch=audit_branch)
    print(f"Write complete: {len(data_df):,} rows written to branch {audit_branch}")

    # AUDIT: run quality checks against the audit branch only
    audit_df = table.scan().use_ref(audit_branch).to_pandas()
    all_passed = True
    for check in quality_checks:
        result = check(audit_df)
        if not result.passed:
            print(f"Audit failed: {check.__name__}: {result.message}")
            all_passed = False

    if not all_passed:
        table.manage_snapshots().remove_branch(audit_branch).commit()
        send_alert(f"WAP audit failed: {table_name}")  # assumed to be defined elsewhere
        return False

    # PUBLISH: fast-forward main to the audited branch
    # Assumption: fast_forward() is kept from the original listing; if your
    # PyIceberg version lacks it, use your engine's equivalent operation.
    table.manage_snapshots().fast_forward(
        branch="main",
        to=audit_branch,
    ).commit()
    print(f"Publish complete: {table_name} main branch updated")
    return True
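Because the Iceberg version depends on a catalog and a specific library version, it can help to see the control flow of WAP in isolation. The sketch below simulates branches with a plain dictionary. `InMemoryTable`, `CheckResult`, `no_null_ids`, and `wap` are all hypothetical names, and the dictionary swap merely stands in for the atomic branch operations a real table format provides.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class CheckResult:
    passed: bool
    message: str = ""


@dataclass
class InMemoryTable:
    """Toy stand-in for a branch-capable table: branch name -> list of rows."""
    branches: dict = field(default_factory=lambda: {"main": []})


def no_null_ids(rows: list[dict]) -> CheckResult:
    """Example quality check: every row must carry an order_id."""
    bad = sum(1 for row in rows if row.get("order_id") is None)
    return CheckResult(bad == 0, f"{bad} rows with NULL order_id")


def wap(table: InMemoryTable, rows: list[dict], checks: list[Callable]) -> bool:
    staging = "audit"
    # WRITE: stage the data where consumers of "main" cannot see it
    table.branches[staging] = list(rows)
    # AUDIT: run every check against the staged copy only
    results = [check(table.branches[staging]) for check in checks]
    if not all(result.passed for result in results):
        del table.branches[staging]  # discard; "main" is untouched
        return False
    # PUBLISH: swap the audited data into "main"
    table.branches["main"] = table.branches.pop(staging)
    return True


orders = InMemoryTable()
print(wap(orders, [{"order_id": 1}, {"order_id": 2}], [no_null_ids]))  # True
print(wap(orders, [{"order_id": None}], [no_null_ids]))                # False
print(orders.branches["main"])  # still the two validated rows
```

The key property to preserve in any real implementation is the one shown by the last line: a failed audit leaves the published data exactly as it was.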

7. Data Engineer Career Path & Skills Roadmap

Career Levels

Junior (0-2 years)

- Core goal: Master SQL, Python, and cloud fundamentals

- Tech stack: Python, SQL, pandas, dbt basics, single cloud intro

- Work: Maintain existing pipelines, add simple dbt models

- Salary (US): $90,000 - $110,000

- Growth signal: Can answer "why does this table look like this?" independently

Mid-Level (2-5 years)

- Core goal: Design, build, and operate pipelines independently

- Tech stack: Spark/Flink, Kafka, Terraform, Airflow, cloud specialization

- Work: Design complex pipelines independently, lead on-call, mentor juniors

- Salary (US): $120,000 - $145,000

- Growth signal: Can form and defend architecture decisions

Senior (5-10 years)

- Core goal: Shape the team's technical direction, platform-level design

- Tech stack: Broad technical depth + business context + FinOps + governance

- Work: Define technical standards, architecture reviews, team capability development

- Salary (US): $150,000 - $175,000+

- Growth signal: Asks "how will my decision affect the team next year?" first

Staff / Principal (10+ years)

- Core goal: Design the organization's overall data strategy

- Role: Data Architect, Engineering Manager, CDO track

- Salary (US): $180,000 - $220,000+

2026–2027 Skills Roadmap — Phase by Phase

Phase 1 (months 0-3): Build the Foundation

✅ Advanced SQL (window functions, CTEs, query optimization)

✅ Python fundamentals (pandas, PEP8, writing tests)

✅ Git version control

✅ Single cloud fundamentals (AWS or GCP or Azure)

✅ Complete your first dbt project

Phase 2 (months 3-9): Pipeline Specialization

✅ Write and operate Apache Airflow DAGs

✅ Advanced dbt (incremental models, macros, packages)

✅ Automate data quality checks (Great Expectations or Soda)

✅ Docker + container fundamentals

✅ Kafka or Kinesis streaming basics

Phase 3 (months 9-18): Architecture Skills

✅ Apache Spark / PySpark in production

✅ Lakehouse architecture (Iceberg + Delta)

✅ Terraform IaC in production

✅ Cloud data platform cost optimization

✅ Data contracts & governance implementation

Phase 4 (18+ months): Specialization & Leadership

[Track A: AI/ML Specialization]

✅ Feature Store design (Feast/Tecton)

✅ MLOps pipelines (MLflow + Kubeflow)

✅ RAG pipeline construction

✅ LLM fine-tuning data processing

[Track B: Platform Engineering Specialization]

✅ Advanced Kubernetes operations

✅ Data platform SRE practices

✅ FinOps leadership

✅ Multi-cloud architecture

Recommended Learning Resources & Certifications

| Area | Resources |
|---|---|
| SQL | Mode Analytics SQL Tutorial, Advanced SQL for Data Scientists |
| dbt | dbt Learn (official), dbt Fundamentals Certification |
| AWS | AWS Certified Data Engineer – Associate (DEA-C01) |
| GCP | Google Professional Data Engineer |
| Spark | Databricks Certified Associate Developer for Apache Spark |
| Kafka | Confluent Certified Developer for Apache Kafka |
| Community | dbt Slack, Data Engineering Weekly, Seattle Data Guy (YouTube) |

8. 2026–2028 Future Outlook

Three Certain Changes

① The Boundary Between AI and Data Engineering Disappears

The line between data engineering and ML engineering is blurring rapidly. The "AI-Native data engineer" who handles feature pipelines, RAG infrastructure, and agentic pipelines is becoming the standard. MLOps engineers who are fluent in both data architecture and AI model deployment are in high demand. Organizations are realizing that trustworthy AI requires a solid data engineering foundation underneath it.

② Agentic Automation Deepens

By 2027, most routine pipeline maintenance is expected to be handled by AI agents. The data engineer's role shifts toward supervising agents, making strategic decisions, and solving complex problems that agents cannot.

③ Data Product-Centric Operations Become Standard

Large enterprises are standardizing on organizing teams and managing SLAs around data products rather than individual pipelines. Each data product has a defined consumer, a quality SLA, version history, and documentation.

What Stays the Same for Data Engineers

Tools change. The fundamentals do not.

Values that remain constant from 2026 to 2030:

1. Data reliability — "Can I trust this number?"

2. Business context — "What decision will this data drive?"

3. Simplicity — "Only as complex as necessary"

4. Ownership — "I'm the first to know when my pipeline breaks"

5. Learning agility — "I don't fear new tools; I understand them through first principles"

9. Playbook Summary — Prioritization Framework for Your Team

Trying to apply all seven parts at once leads to applying none of them. Diagnose where your team is today, and start with the highest-impact change.

30-Minute Team Diagnosis Checklist

What is your biggest pain point right now?

□ You frequently hear "this data is wrong"

→ Start with Part 4 (Data Quality & Governance)

→ Starting point: add dbt tests, write one data contract

□ Pipelines break often and recovery takes too long

→ Start with Part 3 (Pipeline Reliability)

→ Starting point: ensure idempotency, set up alerts, write one runbook

□ Cloud costs are higher than expected

→ Start with Part 5 (FinOps)

→ Starting point: tagging standards, Snowflake auto-suspend, S3 lifecycle policies

□ ML models cannot reach production

→ Start with Part 6 (MLOps)

→ Starting point: MLflow experiment tracking, Feature Store PoC

□ "Who owns this data?" is unclear

→ Start with Part 4 (Governance)

→ Starting point: data owner RACI matrix, data catalog pilot

□ Infrastructure is still being provisioned manually

→ Start with Part 5 (IaC)

→ Starting point: codify 3 core resources with Terraform

Priority Roadmap by Maturity Stage

Early Stage (months 0-6): Build the Foundation

1. Version-control all pipeline code in Git

2. Introduce an orchestrator: Airflow or Prefect

3. Start codifying the transformation layer in dbt

4. Define SLAs for 3 key tables

5. Start on-call rotation (minimum 2 people)

Growing Stage (months 6-18): Embed Quality

1. Apply data contracts to 10 core tables

2. Automate CI/CD pipelines

3. Data catalog pilot

4. Codify core infrastructure as IaC

5. Build a FinOps dashboard

Mature Stage (18+ months): Optimize & Scale

1. Full DataGovOps automation

2. Build AI/ML data infrastructure

3. Experiment with agentic self-healing pipelines

4. Evaluate Data Mesh adoption

5. Company-wide data literacy program

Closing — Completing the Playbook

This has been a long journey across seven parts.

Part 1 surveyed the landscape of data engineering in 2026. Parts 2 and 3 explored architecture and pipeline construction in depth. Part 4 covered quality and governance — the practices that make data trustworthy. Part 5 confronted the realities of cloud infrastructure and cost. Part 6 addressed the convergence with AI. And this final part showed how people and teams sustain all of it over time.

If there is one message that runs through the entire playbook, it is this:

"Trust before technology. Principles before tools. Correctness before speed."

Pipelines built fast break fast. Trust takes time to build — but once it is there, it accelerates the entire team. When data is trusted, every decision in the organization changes.

The World Economic Forum's Future of Jobs Report 2025 ranked big data specialists as the fastest-growing role in technology — with over 100% projected growth from 2025 to 2030. If you chose this path, you are in the right place.

Now close the playbook, and open your code editor.

References

| Part | Key Sources |
|---|---|
| Parts 1–2 | Binariks, KDnuggets, Monte Carlo Data, Databricks |
| Part 3 | dbt Labs, AWS, ZenML, Kai Waehner |
| Part 4 | Alation, Atlan, OvalEdge, dbt Labs, Acceldata |
| Part 5 | Opsio, CloudZero, calmops.com |
| Part 6 | MLflow, Evidently AI, KDnuggets, Qlik, Databricks |
| Part 7 | lakeFS (WAP Pattern), dbt Labs Blog, Monte Carlo Data Blog, WEF Future of Jobs 2025 |