Data Engineering Playbook — Part 2: Deep Dive into Data Architecture Design

A wrong architecture decision can leave an organization buried in technical debt for years. This post compares the three dominant storage architectures — Data Warehouse, Data Lake, and Data Lakehouse — and breaks down the Medallion pattern alongside the three-way battle of open table formats: Apache Iceberg, Delta Lake, and Apache Hudi. It then examines Lambda vs Kappa processing architectures, the distributed ownership paradigm of Data Mesh (including its four principles and an adoption roadmap), and how it differs from Data Fabric. An architecture decision flowchart and a practical checklist help you derive the right design for your organization today.

Series outline

- Part 1 — Overview & 2026 Key Trends (published)

- Part 2 — Data Architecture Design (this post)

- Part 3 — Building Data Pipelines (ETL/ELT, Orchestration, Streaming) (upcoming)

- Part 4 — Data Quality & Governance (upcoming)

- Part 5 — Cloud & Infrastructure (FinOps, IaC) (upcoming)

- Part 6 — AI-Native Data Engineering (upcoming)

- Part 7 — DataOps & Team Operations Playbook (upcoming)

Table of contents

- Why Does Architecture Choice Matter?

- The Three Major Storage Architectures Compared

- Medallion Architecture — Bronze / Silver / Gold

- Open Table Format Showdown

- Lambda vs Kappa — Choosing a Processing Architecture

- Data Mesh — The Distributed Ownership Paradigm

- Data Fabric vs Data Mesh

- Architecture Decision Flowchart

- Practical Checklist

1. Why Does Architecture Choice Matter?

One wrong architecture decision can saddle an organization with technical debt for years. In 2026, with 81% of IT leaders being asked by C-level executives to cut or freeze cloud costs, designing for the right balance of performance and cost has never been more critical.

Choosing an architecture is not a purely technical decision — it is a strategic choice that simultaneously determines an organization's AI competitiveness, data reliability, and operating costs.

Three key questions to answer before you design:

- What types of data do you need, and how fast must you query them?

- Do you need to support AI/ML workloads?

- What is your team size, and what is your operational maturity?

2. The Three Major Storage Architectures Compared

2-1. Data Warehouse — The Gold Standard for Fast, Reliable BI

A data warehouse is a centralized repository that cleanses and integrates structured data from multiple sources. It follows a Schema-on-Write approach — data is modeled before storage — making it ideal for reporting and business intelligence.

[Source DB] → ETL (cleanse/transform first) → [Warehouse] → BI tools
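To make Schema-on-Write concrete, here is a minimal Python sketch of the idea. It assumes a hypothetical `OrderRow` schema and uses plain dicts in place of source-system records. The table's shape is fixed before loading, and any record that does not fit is rejected at ingest instead of landing in the warehouse.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical warehouse table: its shape is fixed before any data lands.
@dataclass(frozen=True)
class OrderRow:
    order_id: int
    order_date: date
    amount: float

def load_schema_on_write(raw_rows):
    """Validate and coerce each record at ingest; reject anything that doesn't fit the schema."""
    loaded, rejected = [], []
    for raw in raw_rows:
        try:
            row = OrderRow(
                order_id=int(raw["order_id"]),
                order_date=date.fromisoformat(raw["order_date"]),
                amount=float(raw["amount"]),
            )
        except (KeyError, ValueError, TypeError):
            rejected.append(raw)  # bad records never reach the warehouse table
            continue
        loaded.append(row)
    return loaded, rejected

raw = [
    {"order_id": "1", "order_date": "2026-04-16", "amount": "19.99"},
    {"order_id": "2", "order_date": "not-a-date", "amount": "5.00"},
    {"order_id": "3", "amount": "7.50"},  # missing order_date
]
loaded, rejected = load_schema_on_write(raw)
print(len(loaded), len(rejected))  # 1 2
```

The up-front validation is what gives the warehouse its quality guarantees. It is also why schema changes are expensive: every change to `OrderRow` has to be coordinated with the loader.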

Advantages

- Fast query performance (indexing, pre-aggregation)

- Strong data quality guarantees (schema enforced on ingest)

- SQL-based, analyst-friendly

- Full ACID transaction support

Disadvantages

- Difficult to handle unstructured or semi-structured data

- Costs escalate sharply as you scale

- Any change in source data or requirements forces coordinated schema and ETL pipeline changes

- Inefficient for ML/AI workloads

Representative tools: Snowflake, Google BigQuery, Amazon Redshift, Azure Synapse Analytics

Best fit: BI/reporting-heavy workloads on structured data; regulated industries (finance, healthcare) with strict compliance; when query speed and consistency are the top priority

2-2. Data Lake — A Cheap, Flexible Ocean of Raw Data

A data lake stores every type of data in its raw form. It follows a Schema-on-Read approach — the schema is applied at query time. Data is stored in object storage (S3, GCS, ADLS) in formats such as Parquet, Avro, or JSON.

[All sources] → Store raw (no transformation) → [Data Lake] → Process when needed
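For contrast, here is a Schema-on-Read sketch. It assumes newline-delimited JSON events stand in for raw files in object storage. Nothing is validated on write, and each consumer applies its own schema at query time. Missing fields simply read as `None`.

```python
import json

# Raw events land in the lake untouched (stand-in for JSON files in S3/GCS/ADLS).
RAW_EVENTS = [
    '{"user": "a", "event": "click", "ts": "2026-04-16T10:00:00"}',
    '{"user": "b", "event": "purchase", "amount": 42.0, "ts": "2026-04-16T10:05:00"}',
    '{"user": "c", "event": "click"}',  # no timestamp, stored anyway
]

def read_with_schema(raw_lines, columns):
    """Apply a schema only at query time: project the requested columns, missing fields become None."""
    for line in raw_lines:
        record = json.loads(line)
        yield {col: record.get(col) for col in columns}

# Two consumers read the same raw data with different schemas.
clicks = list(read_with_schema(RAW_EVENTS, ["user", "event", "ts"]))
revenue = [r for r in read_with_schema(RAW_EVENTS, ["user", "amount"]) if r["amount"] is not None]
print(revenue)  # [{'user': 'b', 'amount': 42.0}]
```

This flexibility comes at a cost. Every reader re-parses the raw files, which explains the slower queries. Nothing stops malformed records from accumulating, which is how a lake turns into a swamp.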

Advantages

- Stores structured, semi-structured, and unstructured data

- Extremely low cost on object storage

- Optimal for ML/AI and exploratory analysis

- Scales to exabyte levels

Disadvantages

- No ACID transactions → risk of data inconsistency

- Without governance it becomes a "Data Swamp"

- Slow query performance

- Row-level updates and deletes are difficult

Representative tools: Amazon S3, Azure Data Lake Storage, Google Cloud Storage + Apache Spark

Best fit: Large-scale unstructured data for ML/AI training; exploratory data analysis (EDA); low-cost long-term data archiving

Data Swamp warning: A data lake without governance quickly becomes a swamp where nobody knows what data exists or who put it there. Data catalogs and governance policies must be established on day one, not as an afterthought.

2-3. Data Lakehouse — The Best of Both Worlds

The dominant data architecture of the 2020s. A Lakehouse combines the low cost and flexibility of a data lake with the performance and governance of a data warehouse through an open table format metadata layer.

[Object storage (S3/GCS)] + [Open table format metadata layer (Iceberg / Delta / Hudi)] → BI queries ↔ ML training ↔ Streaming ↔ Real-time analytics (one platform)

Four core capabilities

- ACID transactions: Reliable reads and writes even on massive datasets

- Schema evolution: Add or modify columns without breaking existing workflows

- Time travel: Query a snapshot of data at any past point in time

- Multi-engine support: Spark, Trino, Flink, Snowflake, and BigQuery can all read the same table, and most can also write to it (write support varies by engine and format)
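Schema evolution is the least intuitive of these four capabilities. The sketch below is a simplified model of the ID-based approach Iceberg uses: data files store values by field ID rather than by column name. The schemas, files, and `read` helper are hypothetical. The sketch shows why renaming a column or adding a new one does not require rewriting old files.

```python
# Simplified model of ID-based schema evolution (Iceberg-style; names are hypothetical).
schema_v1 = {1: "order_id", 2: "amount"}
schema_v2 = {1: "order_id", 2: "amount_usd", 3: "currency"}  # rename field 2, add field 3

# A data file written under schema v1: values are keyed by field ID, not by name.
old_file = [{1: 101, 2: 19.99}, {1: 102, 2: 5.00}]
# A data file written after the schema changed.
new_file = [{1: 103, 2: 7.50, 3: "EUR"}]

def read(files, schema):
    """Project every file through the current schema; fields a file never had read as None."""
    return [
        {name: row.get(field_id) for field_id, name in schema.items()}
        for f in files
        for row in f
    ]

rows = read([old_file, new_file], schema_v2)
print(rows[0])  # {'order_id': 101, 'amount_usd': 19.99, 'currency': None}
```

Because the rename only touches metadata, queries written against the new column name still find the values stored in files written before the rename.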

By 2026, many large enterprises have adopted Lakehouse as their default architecture. For teams building a new data platform from scratch, it has effectively become the standard starting point.

Representative platforms: Databricks Lakehouse, Microsoft Fabric, AWS Lake Formation + Glue + Athena

Comparison table

| Attribute | Data Warehouse | Data Lake | Data Lakehouse |
|---|---|---|---|
| Data types | Structured | All types | All types |
| Schema approach | Schema-on-Write | Schema-on-Read | Both |
| ACID support | ✅ Full | ❌ None | ✅ Full |
| Query performance | ⚡ Very fast | 🐢 Slow | ⚡ Fast |
| Storage cost | 💰💰💰 High | 💰 Low | 💰 Low |
| ML/AI support | △ Limited | ✅ Excellent | ✅ Optimal |
| Governance | ✅ Strong | △ Manual | ✅ Strong |
| Vendor lock-in | ⚠️ High | △ Medium | ✅ Low (open formats) |
| Streaming support | △ Limited | △ Possible | ✅ Excellent |
| Operational complexity | Low | Medium | Medium–High |

2026 practitioner advice: Most mature organizations don't pick just one. A hybrid approach — keeping the warehouse as the golden BI layer while using a Lakehouse as the foundation for AI/ML and exploratory analytics — is the pragmatic reality.

3. Medallion Architecture

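The most widely used data organization pattern in Lakehouse environments. Data is managed across three quality tiers (medals). Bronze holds raw data exactly as ingested. Silver holds cleansed, deduplicated, and conformed data. Gold holds business-level aggregates ready for BI and ML.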

Core principles

- Never modify Bronze: Data received from the source is preserved as-is. If a bug fix is needed, reprocess Silver and Gold.

- Unidirectional flow: Data moves Bronze → Silver → Gold only.

- Multiple Gold layers: Separate Gold layers for Sales, Marketing, and Finance can coexist.
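The three principles can be illustrated with a minimal Python sketch. It uses in-memory lists in place of tables, and the column names are hypothetical. Bronze is read-only input, Silver cleanses and deduplicates, and Gold builds one consumer-specific aggregate. Because Bronze is never modified, fixing a bug in `to_silver` just means rerunning it over Bronze.

```python
from collections import defaultdict

# Bronze: raw records exactly as received from the source (never modified in place).
bronze = [
    {"order_id": "1", "region": " east ", "amount": "10.0"},
    {"order_id": "1", "region": " east ", "amount": "10.0"},  # duplicate delivery
    {"order_id": "2", "region": "WEST", "amount": "bad"},     # unparseable amount
    {"order_id": "3", "region": "west", "amount": "25.5"},
]

def to_silver(bronze_rows):
    """Silver: cleanse, type, and deduplicate. Reads Bronze, never writes back to it."""
    seen, silver = set(), []
    for r in bronze_rows:
        try:
            amount = float(r["amount"])
        except ValueError:
            continue  # a real pipeline would route this to a quarantine table
        if r["order_id"] in seen:
            continue
        seen.add(r["order_id"])
        silver.append({"order_id": int(r["order_id"]),
                       "region": r["region"].strip().lower(),
                       "amount": amount})
    return silver

def to_gold_sales_by_region(silver_rows):
    """Gold: a business-level aggregate for one consumer (e.g. a Sales dashboard)."""
    totals = defaultdict(float)
    for r in silver_rows:
        totals[r["region"]] += r["amount"]
    return dict(totals)

silver = to_silver(bronze)
gold = to_gold_sales_by_region(silver)
print(gold)  # {'east': 10.0, 'west': 25.5}
```

A Marketing or Finance Gold layer would be another function reading the same Silver output. This is how multiple Gold layers coexist without duplicating the cleansing logic.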

A typical dbt project layout implementing Medallion:

4. Open Table Format Showdown

The enabling technology behind Lakehouses. These formats add a metadata layer on top of data files (most commonly Parquet) and deliver ACID transactions, schema evolution, and time travel. Remember: they are table-level metadata specifications, not file formats. The data itself stays in ordinary data files.
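The metadata layer is what makes ACID and time travel possible. In the following toy model, a table is an ordered list of immutable snapshots. A writer commits optimistically against the snapshot it read, and time travel means picking an older snapshot. `ToyTable` and the file names are hypothetical. Real formats do the atomic publish differently. Iceberg swaps the table's current-metadata pointer in the catalog. Delta Lake writes the next numbered JSON entry into `_delta_log`.

```python
import itertools
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Snapshot:
    snapshot_id: int
    committed_at: str   # ISO-8601 string; fixed values keep the example deterministic
    data_files: tuple   # immutable set of data files visible in this snapshot

@dataclass
class ToyTable:
    snapshots: list = field(default_factory=list)
    _next_id: itertools.count = field(default_factory=lambda: itertools.count(1))

    def current_id(self):
        return self.snapshots[-1].snapshot_id if self.snapshots else None

    def commit(self, base_id, new_files, committed_at):
        """Optimistic concurrency: succeed only if no one committed since base_id was read."""
        if base_id != self.current_id():
            raise RuntimeError("concurrent commit detected: re-read the table and retry")
        base_files = self.snapshots[-1].data_files if self.snapshots else ()
        snap = Snapshot(next(self._next_id), committed_at, base_files + tuple(new_files))
        self.snapshots.append(snap)  # the commit point: publish the new snapshot
        return snap.snapshot_id

    def as_of_timestamp(self, ts):
        """Time travel: the latest snapshot committed at or before ts."""
        eligible = [s for s in self.snapshots if s.committed_at <= ts]
        return eligible[-1] if eligible else None

    def as_of_version(self, snapshot_id):
        return next(s for s in self.snapshots if s.snapshot_id == snapshot_id)

table = ToyTable()
s1 = table.commit(None, ["part-000.parquet"], "2026-04-16T09:00:00")
s2 = table.commit(s1, ["part-001.parquet"], "2026-04-16T16:00:00")
print(table.as_of_timestamp("2026-04-16T15:00:00").data_files)  # ('part-000.parquet',)

try:
    table.commit(s1, ["part-002.parquet"], "2026-04-16T17:00:00")  # writer holding a stale base
except RuntimeError as err:
    print(err)
```

Readers never see a half-written commit, because a snapshot becomes visible only after its data files are written.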

Apache Iceberg

The format with the strongest adoption momentum in 2026. Its greatest strength is an engine-agnostic design: Spark, Trino, Flink, Presto, Snowflake, BigQuery, and Redshift can all query the same Iceberg table, and most of them can also write to it (write support varies by engine).

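Time travel queries in Spark SQL:

```sql
-- Iceberg time travel (Spark SQL 3.3+ syntax; Trino uses FOR TIMESTAMP AS OF / FOR VERSION AS OF)

-- Query the table as it was at 2026-04-16 15:00
SELECT * FROM catalog.db.orders
TIMESTAMP AS OF '2026-04-16 15:00:00';

-- Query by specific snapshot ID
SELECT * FROM catalog.db.orders
VERSION AS OF 8954789234567;
```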

Strengths: Unmatched multi-engine support; optimized for large table management (partition pruning, column statistics); large-scale adoption at AWS, Netflix, and Apple

Delta Lake

The format led by Databricks. It delivers peak performance within the Databricks ecosystem and has been open-sourced for use with external engines.

Strengths: Best performance in Databricks environments; mature DML support (UPDATE, DELETE, MERGE); powerful governance through integration with Unity Catalog

Apache Hudi

Specialized for streaming upsert processing. Excels at change data capture (CDC) workloads and near-real-time data ingestion. Backed by large-scale adoption references at Uber and Amazon.

Strengths: Optimal CDC and streaming upsert handling; efficient incremental processing pipelines
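The workload Hudi targets, applying a stream of CDC events as upserts, can be sketched in a few lines of Python. This is a simplified model, not Hudi's API. It assumes a hypothetical primary key `id` and an ordering column `updated_at`, similar to Hudi's record key and precombine field. Within a batch, the latest event per key wins. A late-arriving stale event never overwrites a newer stored row.

```python
def apply_cdc(table, changes, key="id", ordering="updated_at"):
    """Apply insert/update/delete events as an upsert (MERGE) keyed by primary key."""
    # 1. Deduplicate within the batch: keep only the latest event per key.
    latest = {}
    for ev in changes:
        k = ev[key]
        if k not in latest or ev[ordering] >= latest[k][ordering]:
            latest[k] = ev
    # 2. Merge into a copy of the table, ignoring events older than the stored row.
    result = dict(table)
    for k, ev in latest.items():
        current = result.get(k)
        if current is not None and current[ordering] > ev[ordering]:
            continue  # stale event arriving late: keep the newer stored row
        if ev["op"] == "delete":
            result.pop(k, None)
        else:  # "insert" and "update" both become an upsert
            result[k] = {f: v for f, v in ev.items() if f != "op"}
    return result

table = {1: {"id": 1, "status": "new", "updated_at": 1}}
changes = [
    {"op": "update", "id": 1, "status": "paid", "updated_at": 2},
    {"op": "update", "id": 1, "status": "shipped", "updated_at": 3},
    {"op": "insert", "id": 2, "status": "new", "updated_at": 2},
    {"op": "delete", "id": 2, "updated_at": 4},
    {"op": "insert", "id": 3, "status": "new", "updated_at": 5},
]
print(apply_cdc(table, changes))
```

In Delta Lake, the same semantics are usually expressed as a single `MERGE INTO` statement. Hudi builds record-key indexing and incremental pulls around this pattern.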

Format selection guide
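As a rough starting point, the strengths listed above can be turned into a few ordered rules. This is a simplification of the guidance in this section, not a benchmark-backed recommendation. The function name and flags are hypothetical.

```python
def suggest_table_format(on_databricks: bool, cdc_upsert_heavy: bool, multi_engine: bool) -> str:
    """Ordered rules derived from the strengths above (a simplification, not a benchmark)."""
    if cdc_upsert_heavy and not multi_engine:
        return "Apache Hudi"     # strongest at streaming upserts and incremental pipelines
    if on_databricks and not multi_engine:
        return "Delta Lake"      # best performance and Unity Catalog integration on Databricks
    return "Apache Iceberg"      # default: broadest engine support, least lock-in

print(suggest_table_format(on_databricks=True, cdc_upsert_heavy=False, multi_engine=False))  # Delta Lake
```

The rules check multi-engine needs first and fall through to Iceberg. That ordering reflects this article's bias toward vendor independence, so reorder them if a single-platform commitment is already settled.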

5. Lambda vs Kappa — Choosing a Processing Architecture

Two contrasting philosophies for answering the question: "How should we handle batch and streaming?"

Lambda Architecture

Proposed by Nathan Marz (2011). Processes batch and streaming data in parallel and merges the results in a serving layer.
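The three Lambda layers can be sketched with page-view counts. The events are hypothetical, and `Counter` stands in for the precomputed views. The batch layer recomputes from full history, and the speed layer covers only events since the last batch run. The serving layer merges both at query time. Note that the counting logic exists twice, once per layer, which is the "Complexity Tax" described below.

```python
from collections import Counter

# Master dataset: immutable, append-only history (hypothetical page-view events).
historical_events = ["home", "pricing", "home", "docs"]
# Events that arrived after the last batch run.
recent_events = ["home", "pricing"]

def batch_layer(events):
    """Recompute the full view from all history (accurate, but runs hours apart)."""
    return Counter(events)

def speed_layer(events):
    """Incrementally count only what the batch view hasn't covered yet (fast, possibly approximate)."""
    view = Counter()
    for e in events:
        view[e] += 1
    return view

def serving_layer(batch_view, realtime_view):
    """Merge both views at query time."""
    return batch_view + realtime_view

batch_view = batch_layer(historical_events)
realtime_view = speed_layer(recent_events)
print(serving_layer(batch_view, realtime_view)["home"])  # 3
```

When the next batch run absorbs `recent_events`, the speed layer's view for that window is discarded. Any error the speed layer made is overwritten by the batch result.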

When to choose Lambda

- You need to analyze vast historical data alongside real-time data simultaneously

- You need the batch layer to correct errors introduced by the speed layer (dual safety net)

- You operate in a regulated domain (finance, healthcare) where accuracy matters more than speed

Disadvantage: The "Complexity Tax" of maintaining two separate codebases for batch and streaming is high. The same business logic must be written twice and two systems must be operated in parallel.

Kappa Architecture

Proposed by Jay Kreps (Apache Kafka co-creator, 2014) as a simplified alternative to Lambda. It eliminates the batch layer entirely — all data flows through streaming. An immutable log (Kafka) serves as the source of truth; historical reprocessing is done by replaying the log.
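Here is the Kappa idea in the same page-view setting. An append-only Python list stands in for a Kafka topic, and the offsets are list indices. One function handles live processing. After a logic change, the same style of code rebuilds the view by replaying the log from offset 0. The exclusion rule is a hypothetical example of such a change.

```python
from collections import Counter

log = []  # append-only, immutable history (stand-in for a Kafka topic partition)

def count_views(events, exclude=frozenset()):
    """The single codebase: one function serves live processing and full replays."""
    view = Counter()
    for event in events:
        if event not in exclude:
            view[event] += 1
    return view

# Live path: append each event to the log, then process from the last committed offset.
live_view, committed_offset = Counter(), 0
for event in ["home", "pricing", "home", "docs"]:
    log.append(event)
    live_view += count_views(log[committed_offset:])
    committed_offset = len(log)

# Logic change: replay the retained log from offset 0 into a fresh view, then switch
# readers to it. Replay only reaches back as far as log retention allows.
replayed_view = count_views(log, exclude={"docs"})  # hypothetical new rule: ignore docs traffic
print(live_view["home"], replayed_view["docs"])  # 2 0
```

Both views come from one piece of business logic. The trade-off is that the log must retain enough history for the replay, and that replay is where the cost of reprocessing large histories shows up.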

When to choose Kappa

- Operational simplicity is the top priority and you want a single codebase

- Real-time processing dominates: IoT, fraud detection, personalized recommendations

- Your team has solid stream processing expertise

Eliminating the duplicated codebase is a commonly cited reason that companies such as Shopify, Uber, and Twitter have moved from Lambda toward Kappa-style architectures.

Disadvantage: Reprocessing petabytes of historical data is still expensive. Kafka log retention design becomes critical.

Lambda vs Kappa decision matrix

| Criterion | Lambda | Kappa |
|---|---|---|
| Processing model | Batch + streaming in parallel | Streaming only (batch via log replay) |
| Code complexity | High (two codebases) | Low (single pipeline) |
| Accuracy | High (batch corrects stream) | Medium (depends on stream accuracy) |
| Latency | Medium | Low |
| Historical reprocessing | Easy via batch layer | Possible via log replay |
| Operational burden | Two systems to run | Single system |
| Ideal domain | Finance, large-scale analytics | IoT, fraud detection, recommendations |

2026 trend: Apache Flink now handles both batch and streaming in a single engine, blurring the boundary between the two architectures. A "Converged Architecture" that captures the strengths of both Lambda and Kappa is an increasingly realistic choice.

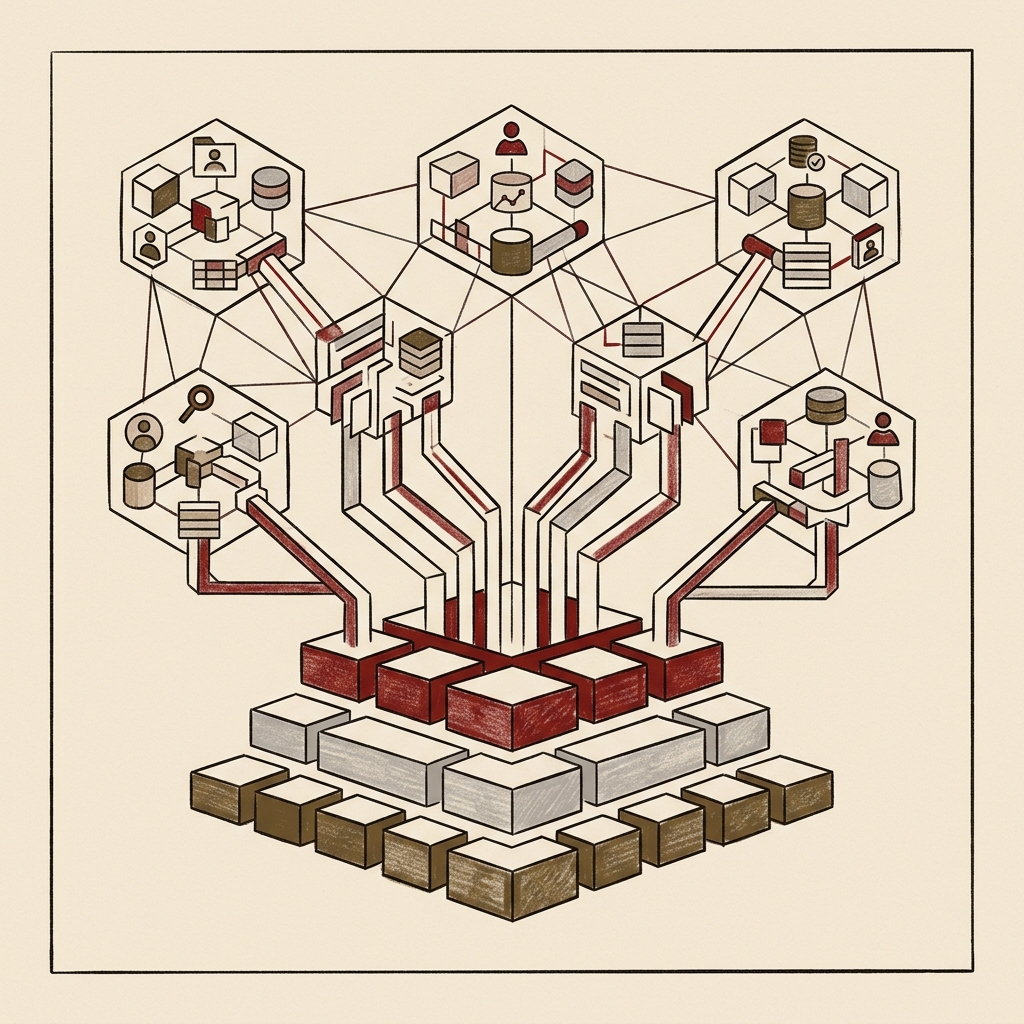

6. Data Mesh — The Distributed Ownership Paradigm

Why Did Data Mesh Emerge?

A centralized data team becomes a bottleneck as the organization grows. When the marketing team wants their own data, they must submit a request to the central data engineering team and wait weeks. The central team is expected to understand every domain but in practice understands none of them deeply.

Data Mesh — proposed by Zhamak Dehghani (2019) — deconstructs the monolithic data platform into domain-owned units, much as microservices decomposed the monolithic application.

"Think of it as moving from a restaurant with a central kitchen serving all food, to a food court where each domain operates its own kitchen."

Four Core Principles

| Principle | Description |
|---|---|
| 1. Domain ownership | Each business domain (Sales, Marketing, Logistics) owns and manages its own data |
| 2. Data as a product | Domain data is served to other teams like an internal API, including quality SLAs, documentation, and versioning |
| 3. Self-serve platform | A platform that lets domain teams build pipelines without central team involvement |
| 4. Federated governance | Domains retain autonomy while enterprise-wide standards, security, and compliance are enforced through shared policy |

Example: Domain Data Products
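A domain data product can be described in code much like an internal API. The sketch below bundles the elements named in principle 2: ownership, a published schema, a version, documentation, and a quality SLA (freshness here). Every field name and value is hypothetical. Real platforms express the same idea in catalog metadata or contract files.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass(frozen=True)
class DataProduct:
    """Hypothetical descriptor for a domain-owned data product (field names are illustrative)."""
    name: str
    owner_domain: str
    version: str              # semantic version; a breaking schema change bumps the major
    schema: dict              # column -> type name, the product's published interface
    freshness_sla: timedelta  # how stale the data may be before the SLA is breached
    description: str = ""

    def is_fresh(self, last_updated: datetime, now: datetime) -> bool:
        return now - last_updated <= self.freshness_sla

orders_daily = DataProduct(
    name="sales.orders_daily",
    owner_domain="Sales",
    version="2.1.0",
    schema={"order_date": "date", "region": "string", "revenue": "decimal(18,2)"},
    freshness_sla=timedelta(hours=24),
    description="Daily revenue by region; one row per (order_date, region).",
)
now = datetime(2026, 4, 17, 9, 0, tzinfo=timezone.utc)
print(orders_daily.is_fresh(datetime(2026, 4, 16, 12, 0, tzinfo=timezone.utc), now))  # True
```

Consumers in other domains depend on `schema` and `version`, not on the Sales team's internal tables. That independence lets the owning domain refactor its pipelines freely.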

Data Mesh Adoption Roadmap

Expect a pilot to take 3–6 months; an organization-wide rollout requires 12–24 months.

Phase 1 (Months 0–3): Select pilot domains

- Identify 2–3 pilot domains with clear boundaries and measurable outcomes

- Embed data engineering expertise within domain teams

- Stand up a basic self-serve platform (Databricks Unity Catalog, Amazon DataZone, etc.)

Phase 2 (Months 3–9): Standardize data products

- Define cross-domain Data Contracts

- Establish federated governance policies (metadata, access control, quality SLAs)

- Measure pilot outcomes and templatize learnings
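A cross-domain Data Contract only helps if it is checked automatically. The sketch below validates a batch against a hypothetical contract that requires certain columns, types, and a maximum null ratio. The contract format and thresholds are assumptions for illustration only. Dedicated tools define richer contract specifications.

```python
# Hypothetical contract agreed between a producing and a consuming domain.
CONTRACT = {
    "required_columns": {"customer_id": int, "country": str, "lifetime_value": float},
    "max_null_ratio": 0.01,  # at most 1% nulls per required column
}

def validate(rows, contract):
    """Return a list of violations; an empty list means the batch honors the contract."""
    violations = []
    for col, expected_type in contract["required_columns"].items():
        values = [r.get(col) for r in rows]
        nulls = sum(v is None for v in values)
        if rows and nulls / len(rows) > contract["max_null_ratio"]:
            violations.append(f"{col}: null ratio {nulls / len(rows):.0%} exceeds limit")
        bad = [v for v in values if v is not None and not isinstance(v, expected_type)]
        if bad:
            violations.append(f"{col}: {len(bad)} value(s) not of type {expected_type.__name__}")
    return violations

batch = [
    {"customer_id": 1, "country": "KR", "lifetime_value": 120.0},
    {"customer_id": 2, "country": None, "lifetime_value": "n/a"},
]
print(validate(batch, CONTRACT))
```

Run this check in the producer's pipeline before publishing. That way a broken batch fails in the owning domain and never reaches consumers.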

Phase 3 (Months 9–24): Scale org-wide

- Build a reusable pattern library

- Roll out incrementally to all remaining domains

When Data Mesh Is NOT the Right Choice

According to Gartner, only 18% of organizations have the governance maturity needed to adopt Data Mesh successfully. Avoid rushing into Data Mesh adoption if any of the following apply:

- Your organization is small with fewer than three domains

- Domain teams lack embedded data engineering capability

- There is no strong C-level sponsorship for organizational change

- Your central data team is already struggling to operate effectively

Core lesson: Data Mesh succeeds or fails on culture and organizational change, not on technology. Thoughtworks' 2026 analysis found that successful organizations focused on sustainable organizational patterns rather than architectural perfection.

7. Data Fabric vs Data Mesh

These two approaches are frequently confused, but their philosophies are fundamentally different.

| Attribute | Data Fabric | Data Mesh |
|---|---|---|
| Approach | Technology-driven (automation, AI metadata) | Organization-driven (domain ownership) |
| Governance | Centralized and automated | Federated and distributed |
| Existing infrastructure | Adds a layer on top of existing systems | Reconstructs around domain boundaries |
| Adoption difficulty | Relatively low | High (requires organizational change) |
| Market size | $3.1B (2025) → $12.5B (2035) | Difficult to measure (org paradigm) |
| Best fit | Legacy-heavy organizations with limited capacity for change | Multi-domain enterprises with mature technical capabilities |

The 2026 reality: Rather than choosing one or the other, many leading companies take a hybrid approach — using Data Fabric as an intelligent infrastructure automation layer while simultaneously applying Data Mesh's domain ownership model.

8. Architecture Decision Flowchart
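The decision flow can be approximated in code by collecting the best-fit guidance from the earlier sections into three branches: storage, processing, and organization. This is a rough sketch. The `Context` fields and the branch order are simplifications, not a complete decision procedure.

```python
from dataclasses import dataclass

@dataclass
class Context:
    """Hypothetical inputs, loosely mirroring the Practical Checklist."""
    bi_on_structured_data: bool    # BI/reporting is a primary workload
    ml_or_unstructured: bool       # ML/AI or unstructured data (images, text, logs)
    needs_streaming: bool          # a latency SLA that batch cannot meet
    batch_correction_needed: bool  # accuracy over speed, e.g. regulated domains
    stream_expertise: bool
    domains: int
    domain_data_engineers: bool
    exec_sponsorship: bool

def recommend(ctx: Context) -> dict:
    # Storage: Lakehouse by default; keep a warehouse where BI on structured data dominates.
    if ctx.bi_on_structured_data and ctx.ml_or_unstructured:
        storage = "Hybrid: warehouse as golden BI layer + Lakehouse for AI/ML"
    elif ctx.bi_on_structured_data:
        storage = "Data Warehouse"
    else:
        storage = "Lakehouse"

    # Processing: Kappa only when the team can run streaming and no batch correction is required.
    if not ctx.needs_streaming:
        processing = "Batch (no streaming requirement yet)"
    elif ctx.stream_expertise and not ctx.batch_correction_needed:
        processing = "Kappa"
    else:
        processing = "Lambda (or a converged engine such as Flink)"

    # Organization: Data Mesh only when every readiness condition holds.
    mesh_ready = ctx.domains >= 3 and ctx.domain_data_engineers and ctx.exec_sponsorship
    organization = "Data Mesh (2-3 domain pilot first)" if mesh_ready else "Centralized data team"

    return {"storage": storage, "processing": processing, "organization": organization}

startup = Context(bi_on_structured_data=True, ml_or_unstructured=True, needs_streaming=False,
                  batch_correction_needed=False, stream_expertise=False,
                  domains=2, domain_data_engineers=False, exec_sponsorship=True)
print(recommend(startup))
```

Use the output as a discussion starter, not a verdict. The checklist below covers the cost, compliance, and team-size questions that these three branches ignore.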

9. Practical Checklist

Answer every question below before locking in your architecture.

Storage architecture

- Have you defined whether your primary query pattern is BI/reporting or ML/exploratory analytics?

- Do you need to handle unstructured data (images, text, logs)?

- Have you evaluated open table formats to minimize vendor lock-in?

- Do you need storage and compute to scale independently?

- Must you support a multi-engine environment (Spark + Trino + Flink)?

Processing architecture

- Have you defined your maximum acceptable latency (latency SLA)?

- How frequently will you need to reprocess historical data?

- Does your team have dedicated stream processing expertise?

- Are you prepared to maintain separate codebases for batch and streaming?

Organizational architecture (when evaluating Data Mesh)

- Do you have 3+ business domains, each an independent data producer?

- Can domain teams build and own embedded data engineering capability?

- Is there C-level executive sponsorship for the organizational change?

- Can you build a shared platform for federated governance?

- Do you have a mechanism to define and enforce Data Contract standards?

Common

- Is your team large enough to handle the operational complexity of the chosen architecture?

- Have you estimated the Total Cost of Ownership (TCO) for each option?

- Do you have a data volume growth forecast for the next three years?

- Have you incorporated compliance requirements (GDPR, local data protection laws, etc.)?
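The TCO and growth-forecast questions come down to simple arithmetic once you have numbers. The sketch below projects yearly storage cost under compound data growth and adds flat yearly compute and people costs. Every figure in the example (100 TB, 50% growth, $23 per TB-month, the compute and people budgets) is a placeholder, not a price quote.

```python
def project_storage_cost(initial_tb: float, annual_growth: float,
                         usd_per_tb_month: float, years: int = 3) -> list:
    """Yearly storage cost with compound data growth (volume taken at the start of each year)."""
    costs, volume = [], initial_tb
    for _ in range(years):
        costs.append(round(volume * usd_per_tb_month * 12, 2))
        volume *= 1 + annual_growth
    return costs

def three_year_tco(storage_costs, annual_compute_usd, annual_people_usd):
    """Total = projected storage + flat yearly compute + flat yearly people cost."""
    return sum(storage_costs) + len(storage_costs) * (annual_compute_usd + annual_people_usd)

storage = project_storage_cost(initial_tb=100.0, annual_growth=0.5, usd_per_tb_month=23.0)
print(storage)  # [27600.0, 41400.0, 62100.0]
print(three_year_tco(storage, annual_compute_usd=120_000, annual_people_usd=300_000))  # 1391100.0
```

Run the projection once per candidate architecture with your own prices. Operational complexity often appears in the people line rather than the storage line.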

Closing Thoughts

Five key messages for data architecture in 2026:

- Lakehouse is the default: Start new platforms with Lakehouse and design for coexistence with your existing warehouse.

- Open formats for freedom: Evaluate Apache Iceberg as your first choice. Multi-engine support and vendor independence are decisive.

- Processing is converging: The boundary between Lambda and Kappa is fading. Consider handling both workloads with Flink alone.

- Data Mesh is culture, not technology: Don't start without sufficient organizational capability and executive sponsorship.

- No architecture is perfect: The best architecture is the one that is "Good Enough" for your team's current capabilities and business needs.

The next part dives into building real data pipelines on top of this architecture — ETL/ELT design, orchestration, and streaming pipelines.

Part 3 preview: Practical Guide to Building Data Pipelines

- ETL vs ELT vs Zero-ETL: how to choose

- Apache Airflow / Dagster / Prefect orchestrator comparison

- Kafka + Flink streaming pipeline design

- dbt patterns and testing strategies in practice

- Pipeline monitoring & alerting design

Written: April 2026 | References: Monte Carlo Data, Databricks, IBM, Algoscale, DataMesh Architecture, Atlan, Flexera, Materialize

References

Use these documents when re-checking the technical claims and operational guidance in this article.