MongoDB ACID — Part 2: Atomicity & Consistency — from single-document updates to multi-document transactions

If Part 1 framed concepts and history, this post focuses on how Atomicity and Consistency are implemented in MongoDB: atomic updates within one BSON document, how commit-time visibility interacts with replication logs for multi-document transactions, and how to enforce rules with JSON Schema validation, unique indexes, and in-transaction checks. It separates atomicity from readConcern, writeConcern, and isolation semantics (reserved for Part 3), and adds practical notes on cross-shard overhead and idempotency when drivers retry transactions. Examples assume a recent MongoDB server and the official Node.js driver; always validate behavior against the manual for your deployment topology and version.

Series outline

- Part 1 — ACID concepts + MongoDB’s historical context

- Part 2 — Atomicity & Consistency in depth: single document vs multi-document (this post)

- Part 3 — Isolation levels and snapshot isolation internals

- Part 4 — Durability, WiredTiger, Write Concern (forthcoming)

- Part 5 — Production patterns, performance, anti-patterns (forthcoming)

Table of contents

- Introduction — boundaries of this post

- Atomicity — engineering “all or nothing”

- Single-document atomicity — the modeling baseline

- Multi-document transactions — commit-time visibility and the oplog

- What Consistency means here

- Enforcing consistency in MongoDB

- Transaction states — from active to committed

- Production-shaped code — when rollback wins

- Performance and operations — when transactions hurt

- Atomicity vs read semantics and deployment shape

- Closing — Part 2 summary

1. Introduction — boundaries of this post

If Part 1 outlined ACID and MongoDB’s evolution, this article goes deeper into A (Atomicity) and C (Consistency) from a storage-engine and application perspective.

One distinction matters up front. Atomicity (writes in a transaction either all land or all roll back) and isolation (what concurrent transactions can observe) are different axes. In MongoDB, read guarantees, replication lag, and snapshot reads are tied to readConcern, write acknowledgment to writeConcern, and concurrency semantics mainly to isolation (Part 3). So it is misleading to claim that “turning on multi-document transactions automatically fixes read consistency.” This post focuses on Atomicity and Consistency; isolation and read semantics continue in Part 3.

2. Atomicity — engineering “all or nothing”

Atomicity sounds abstract, but implementations are concrete. How does a database keep “all or nothing”?

Write-ahead logging (WAL)

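Changes are written to a log before they are applied to the data files. In MongoDB, WiredTiger's journal plays this role for crash recovery, while the oplog is the separate replication log that secondaries replay. If a process crashes mid-flight, recovery replays the journal from the last checkpoint, so committed work survives and incomplete work is discarded.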
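
To make the log-first ordering concrete, here is a minimal in-memory sketch. It is not WiredTiger's journal format: `TinyWalStore` and its record shape are invented for illustration. It only shows the idea that a change is logged (with a commit marker) before it is applied, and that recovery replays only transactions whose commit marker reached the log.

```javascript
// Illustrative only: a toy write-ahead log, not WiredTiger's journal format.
class TinyWalStore {
  constructor(log = []) {
    this.log = log;        // stands in for the on-disk log
    this.data = new Map(); // stands in for the user data
  }

  // Log every change plus a commit marker BEFORE touching the data.
  commit(txnId, changes) {
    for (const [key, value] of Object.entries(changes)) {
      this.log.push({ txnId, type: "set", key, value });
    }
    this.log.push({ txnId, type: "commit" });
    for (const [key, value] of Object.entries(changes)) {
      this.data.set(key, value);
    }
  }

  // Rebuild state from a log: replay only transactions that reached "commit".
  static recover(log) {
    const committed = new Set(
      log.filter((r) => r.type === "commit").map((r) => r.txnId)
    );
    const store = new TinyWalStore(log);
    for (const r of log) {
      if (r.type === "set" && committed.has(r.txnId)) {
        store.data.set(r.key, r.value);
      }
    }
    return store;
  }
}

// A crash after logging txn 2's write but before its commit marker:
const crashedLog = [
  { txnId: 1, type: "set", key: "a", value: 10 },
  { txnId: 1, type: "commit" },
  { txnId: 2, type: "set", key: "a", value: 99 },
];
const recovered = TinyWalStore.recover(crashedLog);
console.log(recovered.data.get("a")); // 10
```

Transaction 2 never logged a commit marker, so its write is ignored on recovery. That is the "all or nothing" property in miniature.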

MVCC-style versioning

Instead of overwriting rows in place, writers accumulate versions and commit publishes a coherent view. WiredTiger uses MVCC.
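
A toy version store can illustrate why a reader with an older snapshot never sees a half-applied commit. This is a sketch under simplifying assumptions (a single global counter as the timestamp, no conflict detection); `TinyMvcc` is a made-up name and does not mirror WiredTiger's internals.

```javascript
// Illustrative only: a toy multi-version store, not WiredTiger's actual design.
class TinyMvcc {
  constructor() {
    this.versions = new Map(); // key -> [{ value, commitTs }]
    this.clock = 0;            // timestamp of the last commit
  }

  // A reader's snapshot is the commit timestamp it started at.
  snapshot() {
    return this.clock;
  }

  // Return the newest version committed at or before the snapshot.
  read(key, snapshotTs) {
    const chain = this.versions.get(key) || [];
    for (let i = chain.length - 1; i >= 0; i--) {
      if (chain[i].commitTs <= snapshotTs) return chain[i].value;
    }
    return undefined;
  }

  // Publish all writes of one transaction under a single new timestamp.
  commit(writes) {
    const commitTs = ++this.clock;
    for (const [key, value] of Object.entries(writes)) {
      if (!this.versions.has(key)) this.versions.set(key, []);
      this.versions.get(key).push({ value, commitTs });
    }
    return commitTs;
  }
}

const store = new TinyMvcc();
store.commit({ balanceA: 100, balanceB: 0 });
const before = store.snapshot();              // a reader starts here
store.commit({ balanceA: 70, balanceB: 30 }); // a transfer commits later
console.log(store.read("balanceA", before), store.read("balanceB", before)); // 100 0
console.log(store.read("balanceA", store.snapshot()));                       // 70
```

Both keys of the transfer share one commit timestamp, so any snapshot sees either both new values or neither.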

One sentence to carry through this post: Atomicity prevents half-applied writes for a transaction boundary; which snapshot a reader sees is governed by separate settings and isolation behavior.

3. Single-document atomicity — the modeling baseline

MongoDB treats updates to one BSON document—fields, arrays, nested sub-documents—as an atomic unit: they apply fully or not at all.

3.1 Why single-document updates are atomic

WiredTiger applies document-level concurrency control together with MVCC, so other operations never observe a document with only some of an update's field changes applied.

    // Conceptual document: one atomic unit
    {
      _id: ObjectId("..."),
      userId: "user_001",
      profile: {
        name: "김개발",
        email: "dev@example.com",
        tier: "premium"
      },
      stats: {
        loginCount: 142,
        lastLogin: ISODate("2026-04-09"),
        totalPurchase: 580000
      },
      recentOrders: [
        { orderId: "ORD-001", amount: 45000 },
        { orderId: "ORD-002", amount: 120000 }
      ]
    }

A single updateOne combining $set, $inc, and $push is atomic without an explicit transaction:

    await db.collection("users").updateOne(
      { _id: userId },
      {
        $set: { "profile.tier": "vip", "stats.lastLogin": new Date() },
        $inc: { "stats.loginCount": 1, "stats.totalPurchase": 85000 },
        $push: { recentOrders: { orderId: "ORD-003", amount: 85000 } }
      }
    );

3.2 Limits of single-document atomicity

By contrast, work that spans multiple documents is not automatically atomic.

    await db.collection("products").updateMany(
      { category: "electronics" },
      { $inc: { price: 1000 } }
    );

updateMany applies per matching document; failures mid-run can leave some documents updated and others not. Each document update may be atomic, but the whole updateMany operation is not a single atomic transaction. If you need “all documents or none,” use a multi-document transaction or redesign into fewer documents.
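
If "all documents or none" is required, one option is to run the same updateMany inside a transaction. The sketch below assumes `client` is a connected MongoClient on a replica set or sharded cluster; the function name `raiseCategoryPrices` and the "shop" database are placeholders, not part of the original example.

```javascript
// Sketch: make a multi-document price change all-or-nothing.
// Assumptions: `client` is a connected MongoClient; "shop" is a placeholder database.
async function raiseCategoryPrices(client, category, delta) {
  const session = client.startSession();
  try {
    let modified = 0;
    await session.withTransaction(async () => {
      const products = client.db("shop").collection("products");
      const result = await products.updateMany(
        { category },
        { $inc: { price: delta } },
        { session } // without this option the write runs outside the transaction
      );
      modified = result.modifiedCount;
    });
    return modified;
  } finally {
    await session.endSession();
  }
}
```

This only suits reasonably small match sets, because the whole update has to finish within the transaction's time and size limits. For very large collections, a modeling change is usually the better answer.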

4. Multi-document transactions — commit-time visibility and the oplog

In replica sets, the oplog is central to how multi-document transactions interact with replication.

4.1 What the oplog is

The oplog is a capped collection (typically local.oplog.rs) that records write operations so secondaries can apply the same changes as the primary.

4.2 Prefer “commit-time visibility” over “one oplog entry”

The shorthand “multi-document transaction = exactly one oplog entry” is not reliable across versions. In MongoDB 4.0 a committed transaction was written as a single oplog entry and therefore had to fit within the 16 MB BSON document limit; since MongoDB 4.2, the server creates as many oplog entries as a large transaction needs. What matters operationally is that uncommitted changes are never visible outside the transaction, and that after commit, secondaries apply the transaction’s effects in a way consistent with your replication guarantees. Always confirm details in the manual for your version.

A coarse timeline:

- startTransaction(): WiredTiger holds a transaction context; uncommitted writes are invisible to other sessions per isolation settings.

- insert / update / delete: Only operations attached to the session participate.

- commitTransaction(): On success, effects become visible to readers as allowed by readConcern/isolation.

- abortTransaction(): Buffered changes are discarded; data returns to the pre-transaction view for that session.
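
The timeline above maps onto the driver's explicit session methods roughly as follows. This is a sketch of the core API path, assuming `client` is a connected MongoClient; `runInTransaction` is a hypothetical helper, and unlike withTransaction it does no retrying.

```javascript
// Sketch of the explicit transaction lifecycle with the Node.js driver's session API.
// Assumption: `client` is a connected MongoClient; `work` is caller-supplied.
async function runInTransaction(client, work) {
  const session = client.startSession();
  try {
    session.startTransaction();
    const result = await work(session); // every operation must pass { session }
    await session.commitTransaction();  // effects become visible on success
    return result;
  } catch (err) {
    if (session.inTransaction()) {
      await session.abortTransaction(); // discard uncommitted writes
    }
    throw err;
  } finally {
    await session.endSession();
  }
}
```

A caller would pass a function such as `(session) => accounts.updateOne(filter, update, { session })`. In application code the withTransaction helper is usually preferable because it also handles transient errors.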

4.3 Deployment prerequisites

Multi-document transactions require a replica set or a sharded cluster; a standalone mongod does not support them. Many teams therefore run a single-node replica set locally to exercise transactions.

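    # Terminal 1: start a single-node replica set member (runs in the foreground)
    mongod --replSet rs0 --port 27017 --dbpath /data/db

    # Terminal 2: initiate the replica set once
    mongosh --eval "rs.initiate()"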
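
An application can check the topology at startup instead of discovering the problem on the first transaction. The sketch below inspects a `hello` reply: replica set members report a `setName`, and mongos routers report `msg: "isdbgrid"`. The helper names are hypothetical, and it assumes a server recent enough to support the `hello` command (older servers use `isMaster`).

```javascript
// Sketch: classify a deployment from a `hello` command reply.
function deploymentKind(hello) {
  if (hello.msg === "isdbgrid") return "sharded";              // reply came from mongos
  if (typeof hello.setName === "string") return "replicaSet";  // replica set member
  return "standalone";
}

function supportsTransactions(hello) {
  return deploymentKind(hello) !== "standalone";
}

// Assumption: `client` is a connected MongoClient.
async function assertTransactionsAvailable(client) {
  const hello = await client.db("admin").command({ hello: 1 });
  if (!supportsTransactions(hello)) {
    throw new Error("Multi-document transactions need a replica set or sharded cluster");
  }
  return deploymentKind(hello);
}
```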

4.4 Sharding overhead

Transactions that write to multiple shards are committed with a two-phase commit across the participating shards, which adds coordination, locking, and latency. If your shard key routes transactional work across shards frequently, revisit data modeling and access paths; keeping related writes on one shard or inside one document reduces pain.

5. What Consistency means here

“Consistency” is often glossed as “data stays consistent.” In ACID, Consistency means the database remains in a valid state with respect to declared rules—schema constraints, invariants, and business rules—before and after each transaction.

Many business rules are not fully expressible in the database. Split what schema validation can enforce from what application code must verify.

6. Enforcing consistency in MongoDB

6.1 Schema validation (JSON Schema)

From MongoDB 3.6 onward, JSON Schema validation can reject invalid inserts/updates.

    await db.createCollection("accounts", {
      validator: {
        $jsonSchema: {
          bsonType: "object",
          required: ["accountId", "balance", "currency"],
          properties: {
            accountId: { bsonType: "string" },
            balance: {
              bsonType: "number",
              minimum: 0
            },
            currency: {
              bsonType: "string",
              enum: ["KRW", "USD", "EUR"]
            }
          }
        }
      },
      validationAction: "error"
    });
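
With validationAction "error", a rejected write surfaces in the application as a server error. The sketch below shows one way to turn that into a structured result. It assumes the rejection arrives with error code 121 (DocumentValidationFailure) and, on newer servers, an `errInfo` object describing the failed rule; `insertAccount` is a hypothetical helper.

```javascript
// Sketch: convert a schema-validation rejection into a structured result.
// Assumption: the server reports DocumentValidationFailure as error code 121.
const DOCUMENT_VALIDATION_FAILURE = 121;

async function insertAccount(accounts, doc) {
  try {
    const { insertedId } = await accounts.insertOne(doc);
    return { ok: true, insertedId };
  } catch (err) {
    if (err && err.code === DOCUMENT_VALIDATION_FAILURE) {
      // errInfo, when present, carries the server's explanation of the failed rule
      return { ok: false, reason: "validation", details: err.errInfo ?? null };
    }
    throw err; // anything else is not a validation problem
  }
}
```

For example, inserting `{ accountId: "A-1", balance: -5, currency: "KRW" }` into the collection defined above should come back as `{ ok: false, reason: "validation", ... }` because of the `minimum: 0` rule.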

6.2 Unique indexes

Unique indexes prevent duplicate values—classic for double-booking scenarios.

    await db.collection("reservations").createIndex(
      { userId: 1, slotDate: 1 },
      { unique: true }
    );
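
The index does the enforcement; the application only needs to recognize the rejection. A minimal sketch, assuming the duplicate surfaces as a server error with code 11000 (duplicate key) and using a hypothetical `reserveSlot` helper:

```javascript
// Sketch: let the unique index arbitrate, and map the duplicate-key error to a result.
// Assumption: a unique-index violation is reported with error code 11000.
const DUPLICATE_KEY = 11000;

async function reserveSlot(reservations, userId, slotDate) {
  try {
    const { insertedId } = await reservations.insertOne({
      userId,
      slotDate,
      createdAt: new Date(),
    });
    return { reserved: true, reservationId: insertedId };
  } catch (err) {
    if (err && err.code === DUPLICATE_KEY) {
      // The same user already holds this slot; the index rejected the second insert.
      return { reserved: false, reason: "duplicate" };
    }
    throw err;
  }
}
```

Inserting first and handling the error avoids the check-then-insert race that a separate "does it exist?" query would reintroduce.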

6.3 Application checks inside a transaction

Complex rules are often enforced by read-then-write inside withTransaction. Pass the same session to every read and write or you break the transactional boundary.

    async function transferFunds(db, fromId, toId, amount) {
      // Assumes this Db handle exposes its MongoClient as db.client;
      // if yours does not, pass the client in explicitly.
      const session = db.client.startSession();
      try {
        await session.withTransaction(async () => {
          const accounts = db.collection("accounts");

          // Reads go through the same session so they belong to the transaction.
          const sender = await accounts.findOne({ _id: fromId }, { session });
          if (!sender) throw new Error("Sender account not found");
          if (sender.balance < amount) throw new Error("Insufficient balance");

          const receiver = await accounts.findOne({ _id: toId }, { session });
          if (!receiver) throw new Error("Receiver account not found");

          // Debit, credit, and the audit record commit or roll back together.
          await accounts.updateOne(
            { _id: fromId },
            { $inc: { balance: -amount } },
            { session }
          );
          await accounts.updateOne(
            { _id: toId },
            { $inc: { balance: amount } },
            { session }
          );
          await db.collection("transactions").insertOne(
            { from: fromId, to: toId, amount, ts: new Date() },
            { session }
          );
        });
      } finally {
        await session.endSession();
      }
    }
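
A variation worth considering is to fold the balance check into the update filter, so the check and the debit are a single atomic step rather than a read followed by a write. The helper below is a sketch with a hypothetical name (`debitIfSufficient`); `accounts` is the collection handle and `session` the transaction's session, both supplied by the caller.

```javascript
// Sketch: check and debit in one conditional update.
// Assumptions: `accounts` is a collection handle; `session` belongs to the active transaction.
async function debitIfSufficient(accounts, accountId, amount, session) {
  const result = await accounts.updateOne(
    { _id: accountId, balance: { $gte: amount } }, // matches only if funds suffice
    { $inc: { balance: -amount } },
    { session }
  );
  if (result.matchedCount === 0) {
    // Either the account does not exist or the balance is too low.
    throw new Error("Insufficient balance or account not found");
  }
  return result;
}
```

Throwing inside the withTransaction callback aborts the transaction, so a failed debit also discards any writes made earlier in the same callback.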

7. Transaction states — from active to committed

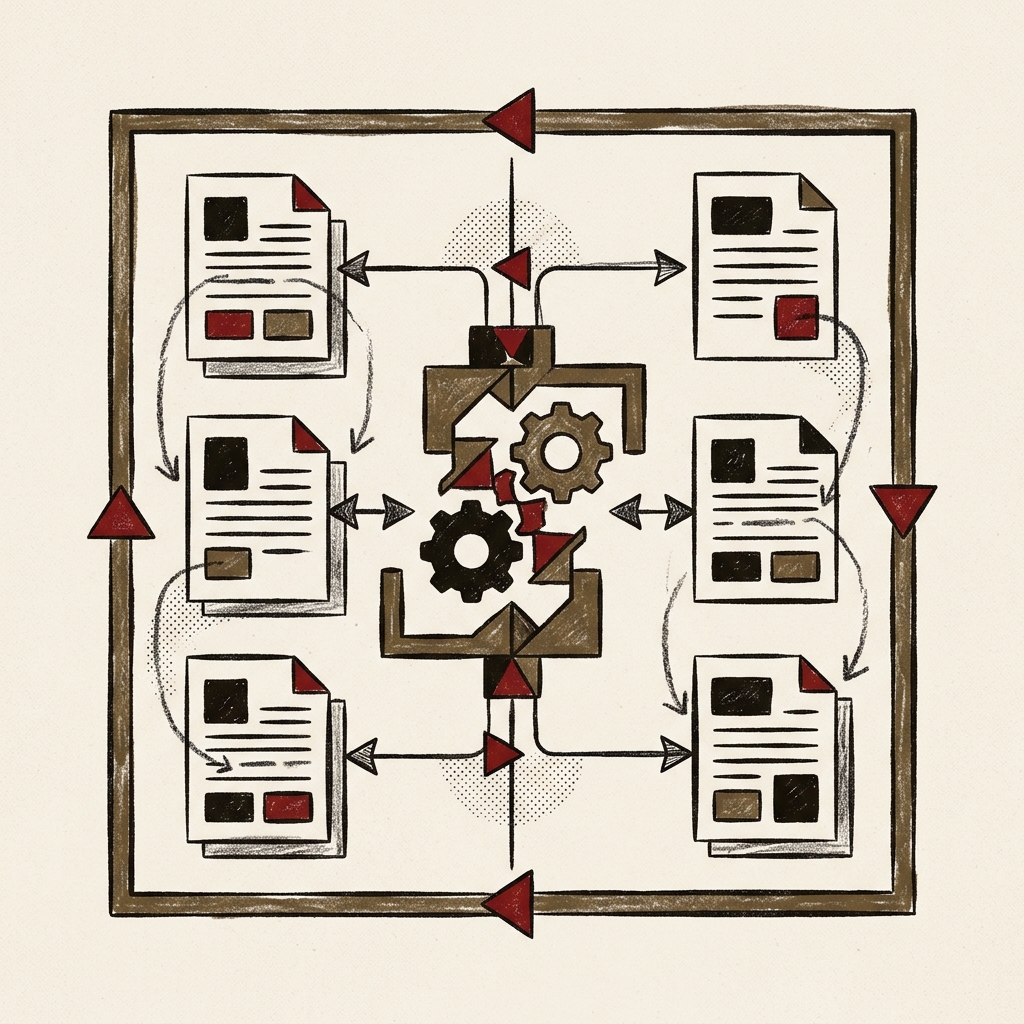

The following diagram is pedagogical, not a literal server state machine:

| Phase (informal) | Meaning |

|---|---|

| ACTIVE | Transaction in progress; reads/writes follow session + readConcern / isolation settings |

| COMMITTED | Commit finished; effects visible to readers as allowed |

| FAILED / ABORTED | Error or abort; rolled back from the client’s perspective |

8. Production-shaped code — when rollback wins

The following ties inventory decrement and order insert into one transaction. It targets MongoDB 6.x with the Node.js driver 6.x family. findOne plus conditional updateOne avoids driver differences in findOneAndUpdate return shapes; under high concurrency, consider atomic conditional updates or optimistic locking.

    const { MongoClient } = require("mongodb");

    // The driver connects on first use; call client.connect() at startup to fail fast.
    const client = new MongoClient("mongodb://localhost:27017/?replicaSet=rs0");

    async function createOrder(customerId, productId, quantity) {
      const session = client.startSession();
      try {
        let orderId;
        await session.withTransaction(
          async () => {
            const db = client.db("shop");
            const orders = db.collection("orders");
            const inventory = db.collection("inventory");

            // Read the inventory row (and its price) inside the transaction.
            const inv = await inventory.findOne(
              { productId, quantity: { $gte: quantity } },
              { session }
            );
            if (!inv) {
              throw new Error(`Insufficient stock: ${productId}`);
            }

            // Conditional decrement: applies only if enough stock is still there.
            const dec = await inventory.updateOne(
              { _id: inv._id, quantity: { $gte: quantity } },
              { $inc: { quantity: -quantity } },
              { session }
            );
            if (dec.modifiedCount === 0) {
              throw new Error(`Stock race: ${productId}`);
            }

            const orderInsert = await orders.insertOne(
              {
                customerId,
                productId,
                quantity,
                status: "confirmed",
                unitPrice: inv.price,
                totalAmount: inv.price * quantity,
                createdAt: new Date()
              },
              { session }
            );
            orderId = orderInsert.insertedId;

            await db.collection("customers").updateOne(
              { _id: customerId },
              {
                $inc: {
                  totalOrders: 1,
                  totalSpent: inv.price * quantity
                },
                $set: { lastOrderDate: new Date() }
              },
              { session }
            );
          },
          {
            readConcern: { level: "snapshot" },
            writeConcern: { w: "majority" }
          }
        );
        return { success: true, orderId };
      } catch (error) {
        // Any throw inside the callback aborts the transaction; nothing is persisted.
        return { success: false, error: error.message };
      } finally {
        await session.endSession();
      }
    }

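withTransaction starts the transaction, runs the callback, and commits if the callback resolves. If the callback throws, it aborts the transaction and rethrows the error, except that an error labeled TransientTransactionError causes the whole callback to be retried, and a commit that fails with the UnknownTransactionCommitResult label is retried as well. Retry limits and timeouts differ between driver versions, so read the driver docs for yours.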
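
For single-document contention, optimistic locking is one alternative to a transaction. The sketch below assumes each document carries a numeric `version` field (a convention introduced here, not something MongoDB maintains for you) and that `mutate` returns a plain object of fields to `$set`, excluding `version` itself; `updateWithVersion` is a hypothetical helper.

```javascript
// Sketch of optimistic locking with a hypothetical numeric `version` field.
async function updateWithVersion(collection, id, mutate, maxAttempts = 3) {
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const current = await collection.findOne({ _id: id });
    if (!current) throw new Error(`Document not found: ${id}`);

    const changes = mutate(current); // fields to $set, must not include `version`
    const result = await collection.updateOne(
      { _id: id, version: current.version },  // matches only if nobody changed it
      { $set: changes, $inc: { version: 1 } }
    );
    if (result.modifiedCount === 1) return attempt;
    // Another writer won the race; loop to re-read and try again.
  }
  throw new Error(`Gave up after ${maxAttempts} conflicting attempts`);
}
```

The return value is the number of attempts it took, which is handy as a contention metric. The pattern relies on single-document atomicity only, so it also works where transactions are unavailable.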

8.1 Retries and side effects

Retries can re-run the callback. Database writes remain subject to transactional atomicity, but side effects outside MongoDB (HTTP calls, message publishes) may execute more than once unless you design idempotency, outbox tables, or downstream deduplication.
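
One way to keep external side effects out of the retried callback is a transactional outbox: the event is written in the same transaction as the business data, and a separate relay publishes it afterwards. The sketch below is illustrative only. The collection names, the event shape, and the requirement that the caller supplies `order._id` are assumptions, and `publish` stands in for whatever message client you use.

```javascript
// Sketch of a transactional outbox. Names and event shape are placeholders.
// Assumptions: `client` is a connected MongoClient; `order._id` is set by the caller.
async function createOrderWithOutbox(client, order) {
  const session = client.startSession();
  try {
    await session.withTransaction(async () => {
      const db = client.db("shop");
      await db.collection("orders").insertOne(order, { session });
      // The event commits or rolls back together with the order.
      await db.collection("outbox").insertOne(
        {
          _id: `order-created:${order._id}`, // stable id for downstream deduplication
          type: "order.created",
          orderId: order._id,
          publishedAt: null,
        },
        { session }
      );
    });
  } finally {
    await session.endSession();
  }
}

// A separate relay publishes committed events; consumers dedupe on event._id.
async function relayOutbox(outbox, publish) {
  const pending = await outbox.find({ publishedAt: null }).toArray();
  for (const event of pending) {
    await publish(event); // may repeat after a crash, hence the stable _id
    await outbox.updateOne(
      { _id: event._id },
      { $set: { publishedAt: new Date() } }
    );
  }
  return pending.length;
}
```

The relay gives at-least-once delivery: a crash between publish and the update republishes the event, so consumers still need to deduplicate on the event id.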

9. Performance and operations — when transactions hurt

Transactions pay for locks, sessions, and logging.

9.1 WiredTiger cache and long transactions

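Long transactions pin old document versions in the WiredTiger cache and hold locks, so keep transactions short. By default the server aborts a transaction that runs longer than 60 seconds (transactionLifetimeLimitSeconds); treat that limit as a guardrail, not a target.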
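
When a job touches many documents and does not need to be atomic as a whole, one way to stay short is to split it into several small transactions. The sketch below assumes `client` is a connected MongoClient and `applyBatch(batch, session)` is a caller-supplied async function; both helper names are hypothetical. Note the trade-off: atomicity then holds per batch, not for the entire job.

```javascript
// Sketch: process a large job as several short transactions instead of one long one.
function chunk(items, size) {
  const out = [];
  for (let i = 0; i < items.length; i += size) {
    out.push(items.slice(i, i + size));
  }
  return out;
}

// Assumptions: `client` is a connected MongoClient; `applyBatch` passes { session } to its writes.
async function applyInShortTransactions(client, items, applyBatch, batchSize = 100) {
  let committed = 0;
  for (const batch of chunk(items, batchSize)) {
    const session = client.startSession();
    try {
      await session.withTransaction(() => applyBatch(batch, session));
      committed += batch.length; // this batch is durable even if a later one fails
    } finally {
      await session.endSession();
    }
  }
  return committed;
}
```

If a later batch fails, earlier batches stay committed, so the job should be written to resume or to be safely re-run.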

9.2 Lock waiting

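Hot documents under contention cost time in two ways: transactions that try to modify the same document hit write conflicts and have to be retried, and a write outside a transaction waits until the transaction holding that document ends. Frequent conflicts or waits are often a signal to revisit schema and access patterns.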

9.3 Single document vs multi-document

| Aspect | Single-document updates | Multi-document transactions |

|---|---|---|

| Overhead | Lower | Sessions, coordination, logging |

| Good fit | One document carries the invariant | Multiple collections/documents must move together |

| Design hint | Align document boundaries with business boundaries | Reduce shard hops and contention |

10. Atomicity vs read semantics and deployment shape

The flowchart below is a review aid, not an absolute decision tree.

In short:

- Atomicity stops half-successful writes for a transaction.

- Isolation and read concern shape what readers can see and when. Part 3 focuses there. Durability and write concern (how far a commit is replicated/persisted) continue in Part 4.

- Multi-document transactions need non-standalone deployments; sharded clusters need extra care for cross-shard paths.

11. Closing — Part 2 summary

Atomicity — single-document updates apply atomically to one BSON document; multi-document needs transactions or a modeling change. The “commits as one unit” intuition holds, but oplog splitting depends on version—verify in the manual.

Consistency — JSON Schema, unique indexes, and in-transaction checks enforce rules; many invariants remain application responsibilities.

Operations — read withTransaction alongside readConcern / writeConcern, and treat external side effects as non-transactional unless you add idempotent design.

Part 3 ties Isolation, snapshot reads, concurrency anomalies, and read concern together with fail-on-conflict and retries. The durability side of write concern (replication/persistence) picks up in Part 4.

References

- MongoDB Manual — Atomicity and write operations

- MongoDB Manual — Transactions

- MongoDB Manual — Transactions in applications

- MongoDB Manual — Replica set oplog

- MongoDB Manual — Schema validation

- MongoDB Manual — Transactions in sharded clusters

- MongoDB 4.2 release notes — Transactions